Feedback Evaluation

Overview

Feedback Evaluation tests how the assistant answers questions after a configuration change. It replays past conversations where users left feedback and regenerates the answers with the current configuration. A similarity score from 0 to 1 then measures how close each new answer is to the original.

This feature is available for:

- Assistant owners — to evaluate their assistant's responses after changing its configuration.

When to Use It

Use Feedback Evaluation after changing the assistant configuration to understand the impact on answer quality:

- You updated the system prompt and want to know if responses improved.

- You switched to a different AI model and want to compare outputs.

- You adjusted retrieval settings and want to verify answers are still consistent.

- You want a baseline measurement of how reliably your assistant answers recurring questions.

How It Works

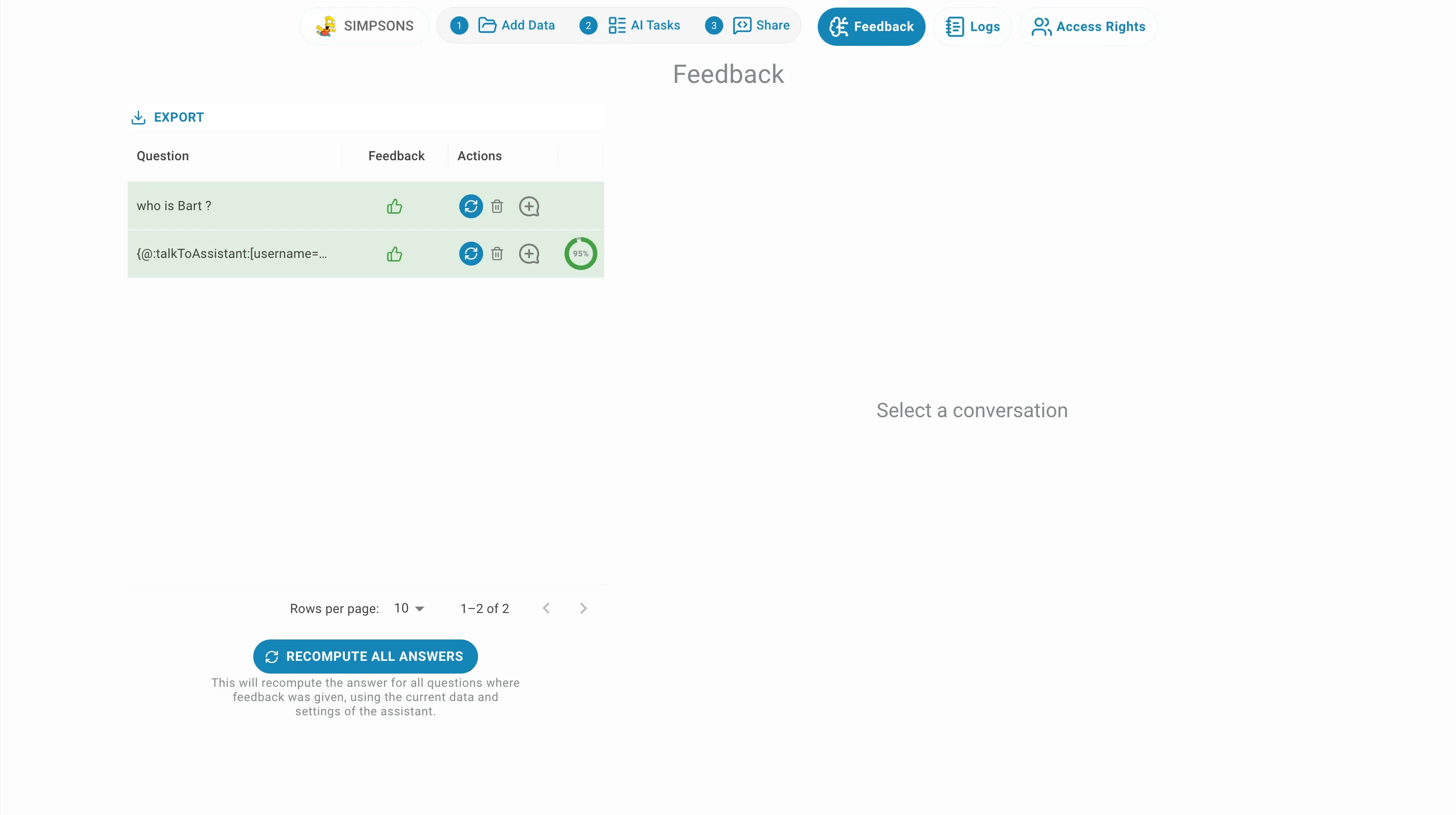

1. Trigger an evaluation from the feedback tab, either inside the assistant or in the admin panel. You can recompute the answer for a single feedback entry, or recompute every entry at once by clicking RECOMPUTE ALL ANSWERS.

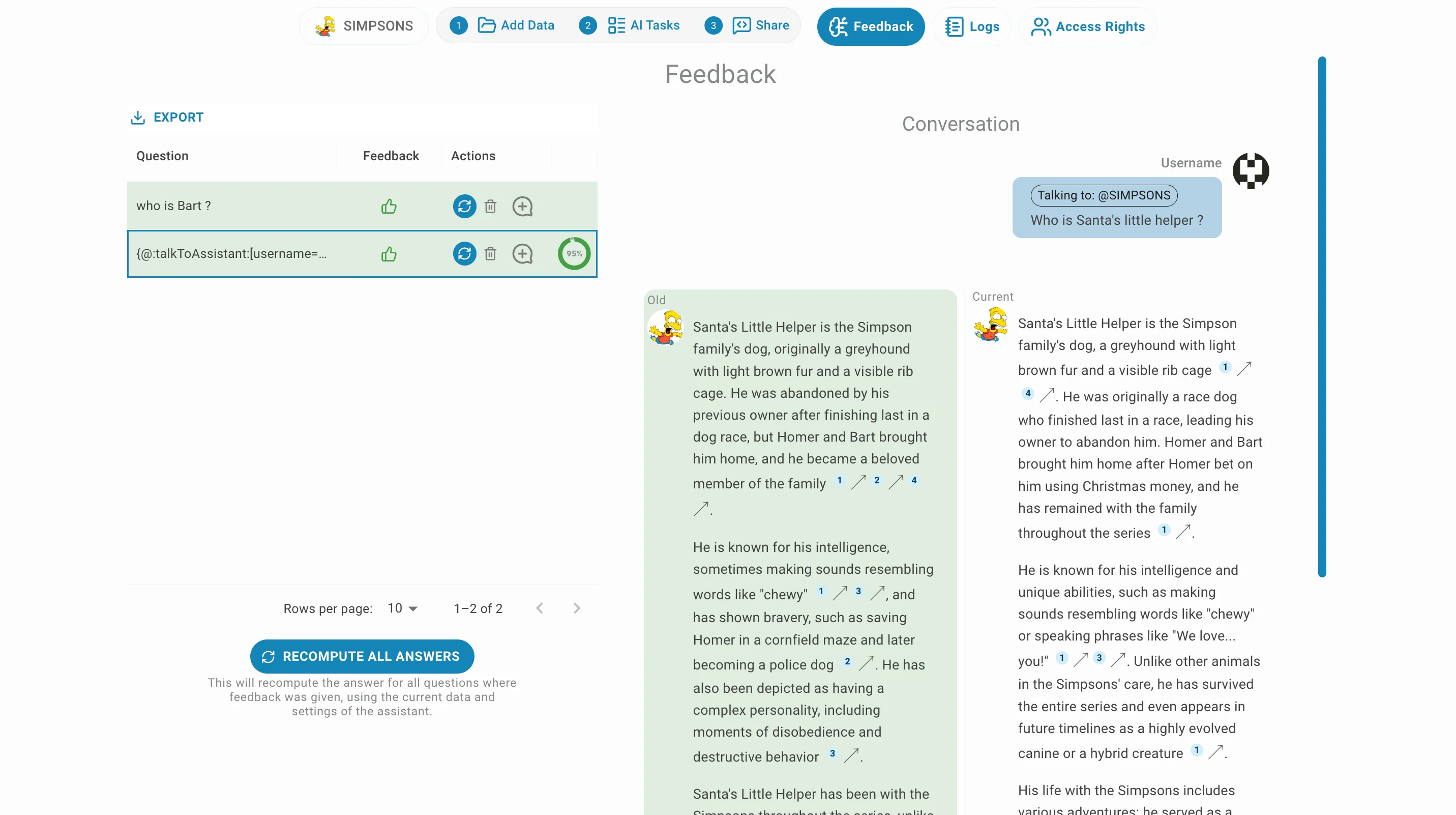

2. Answers are regenerated — the system replays each conversation from your feedback history, asking the assistant or chatbot the same questions again with the current (or provided) configuration.

3. Similarity is measured — for each positively-rated feedback, the regenerated answer is compared to the original. A score from 0 to 1 is assigned:

- 1.0: the new answer is essentially the same as the original.

- 0.0: the new answer is completely different.

- For negatively-rated feedbacks, answers are regenerated but no score is computed, since the original was already marked as wrong.

4. Results arrive in real time — scores appear as each feedback is processed, without waiting for all feedbacks to complete.
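The steps above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the product's implementation: the `Feedback` and `EvaluationResult` records, the `generate` callback, and the use of `difflib.SequenceMatcher` as the similarity measure are all hypothetical stand-ins, since the actual metric and data model are not described here. The generator yields each result as soon as it is ready, which mirrors how scores appear one by one.

```python
from dataclasses import dataclass
from difflib import SequenceMatcher
from typing import Callable, Iterable, Iterator, Optional


@dataclass
class Feedback:
    # Hypothetical record shape; the real feedback schema is not shown here.
    question: str
    original_answer: str
    positive: bool  # True for a positive rating, False for a negative one


@dataclass
class EvaluationResult:
    feedback: Feedback
    new_answer: str
    score: Optional[float]  # None when no score is computed


def similarity(a: str, b: str) -> float:
    # Stand-in metric: character-level ratio in [0, 1] from the standard library.
    # The product's actual similarity measure is an unknown here.
    return SequenceMatcher(None, a, b).ratio()


def evaluate(
    feedbacks: Iterable[Feedback],
    generate: Callable[[str], str],
) -> Iterator[EvaluationResult]:
    """Regenerate each answer and yield results one at a time."""
    for fb in feedbacks:
        try:
            new_answer = generate(fb.question)
        except Exception:
            # Mirrors the -1 score used when an item cannot be evaluated.
            yield EvaluationResult(fb, "", -1.0)
            continue
        # Only positively-rated feedbacks receive a similarity score.
        score = similarity(fb.original_answer, new_answer) if fb.positive else None
        yield EvaluationResult(fb, new_answer, score)


if __name__ == "__main__":
    history = [
        Feedback("What is the refund window?", "30 days from delivery.", True),
        Feedback("Do you ship abroad?", "No.", False),
    ]
    # A fake assistant that always returns the same text, for demonstration.
    for result in evaluate(history, lambda q: "30 days from delivery."):
        print(result.feedback.question, result.score)
```

Writing the loop as a generator keeps the caller free to display or store each result immediately instead of waiting for the whole feedback history to finish.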

Evaluation History

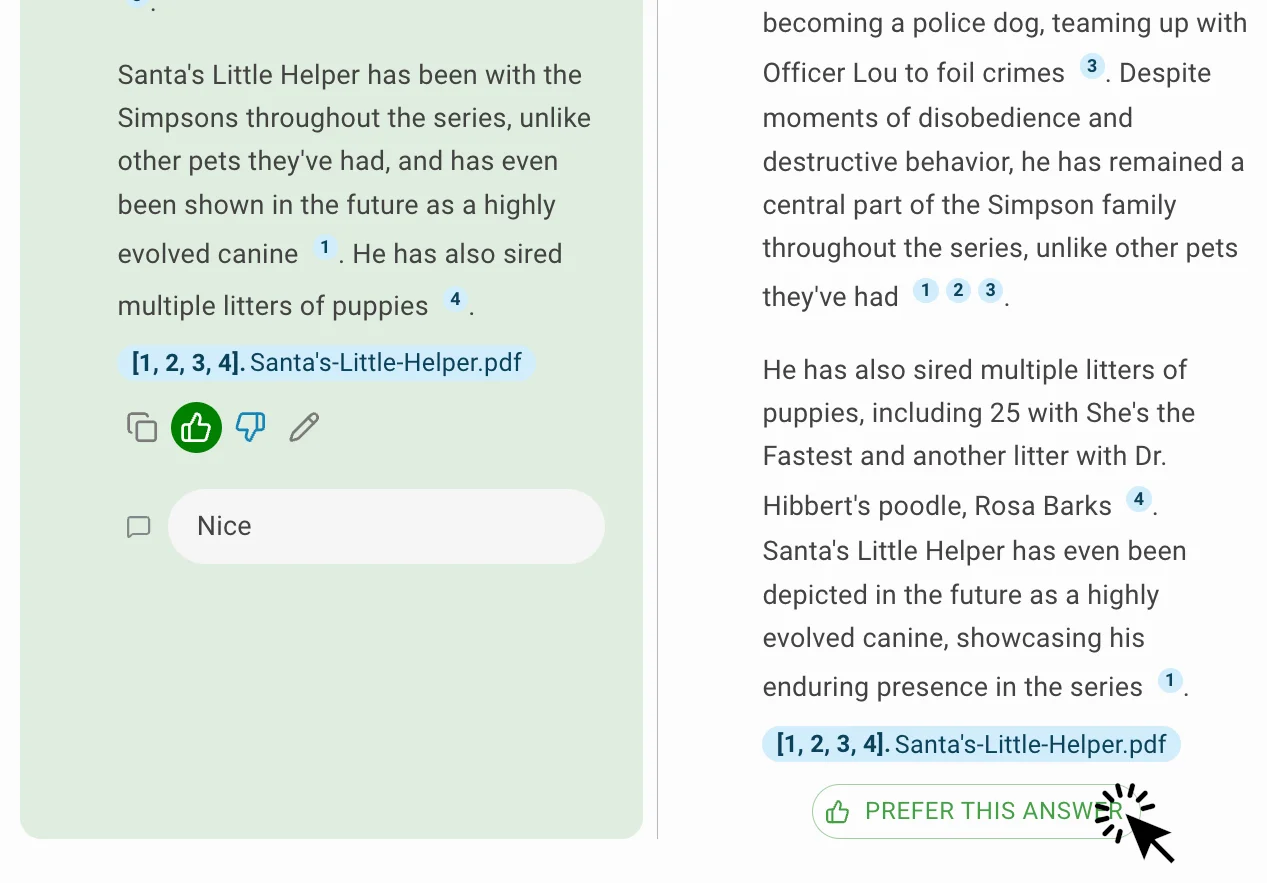

Once a new answer is generated, you can mark it as the better answer by clicking the THIS ANSWER IS BETTER button. The answer is then saved with the feedback, which helps steer the assistant's or chatbot's answers in the desired direction.

Similarity Score Explained

| Score | Meaning |
|---|---|
| 0.9 – 1.0 | Answers are nearly identical; the assistant is very consistent. |
| 0.6 – 0.9 | Answers share the same intent but may differ in wording or detail. |
| 0.3 – 0.6 | Noticeable differences; worth reviewing. |
| 0.0 – 0.3 | Answers are substantially different; the configuration change has a strong impact. |
| -1 | Evaluation could not be completed for this item (error). |

A lower score is not always bad — if the original answer was poor, a very different regenerated answer may actually be an improvement. Combine similarity scores with the original feedback ratings to interpret results correctly.
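As a rough illustration of reading scores together with ratings, the sketch below maps a score to the bands in the table above and flags items worth a manual look. The function names, the default 0.6 review threshold, and the choice to assign boundary values (0.3, 0.6, 0.9) to the higher band are assumptions made for this example; the table itself does not say which band a boundary value belongs to.

```python
from typing import Optional


def describe_score(score: Optional[float]) -> str:
    """Map a similarity score to a band from the table above."""
    if score is None:
        return "not scored"  # e.g. negatively-rated feedback
    if score == -1:
        return "error"
    if not 0.0 <= score <= 1.0:
        raise ValueError(f"unexpected score: {score}")
    # Assumption: a boundary value falls into the higher band.
    if score >= 0.9:
        return "nearly identical"
    if score >= 0.6:
        return "same intent, different wording or detail"
    if score >= 0.3:
        return "noticeable differences"
    return "substantially different"


def needs_review(
    score: Optional[float],
    positive: bool,
    threshold: float = 0.6,  # hypothetical cut-off, tune to your own needs
) -> bool:
    """Flag items that probably deserve a manual look."""
    if score == -1:
        return True  # evaluation failed
    if positive and score is not None:
        # A previously good answer that changed noticeably.
        return score < threshold
    # No score available (negative rating): only a person can judge
    # whether the regenerated answer is an improvement.
    return True


if __name__ == "__main__":
    for s, rating in [(0.95, True), (0.4, True), (None, False), (-1, True)]:
        print(s, describe_score(s), needs_review(s, rating))
```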

Admin & Evaluator Review

Admins and organisation evaluators can review and evaluate user feedback directly from the admin panel.

1. Go to Organisation → Conversation Feedback in the admin panel.

2. Browse feedback entries — view ratings and comments left by users on assistant responses.

3. Trigger re-evaluation — click recompute to regenerate answers with the current configuration and compare results.