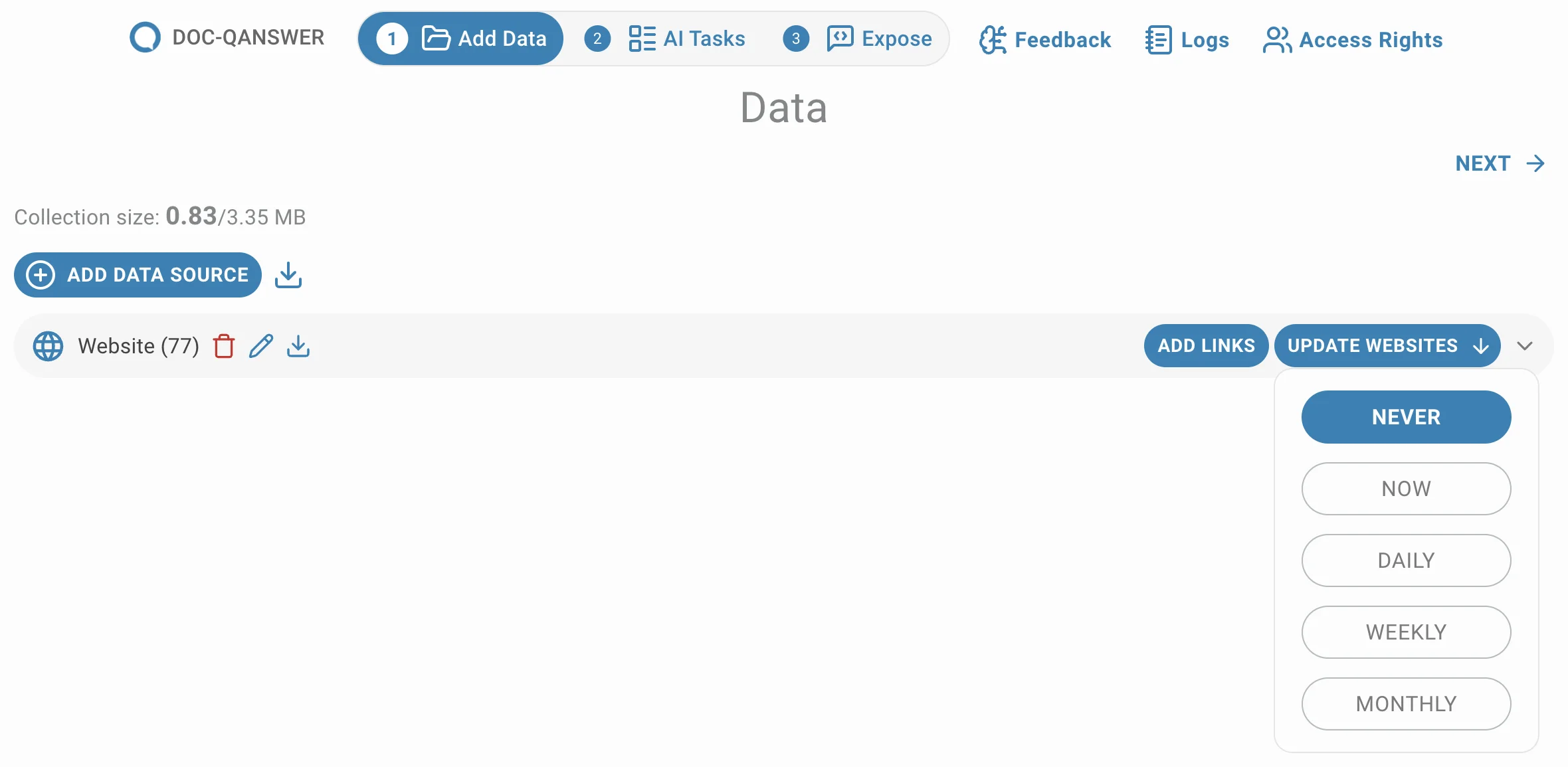

Chat

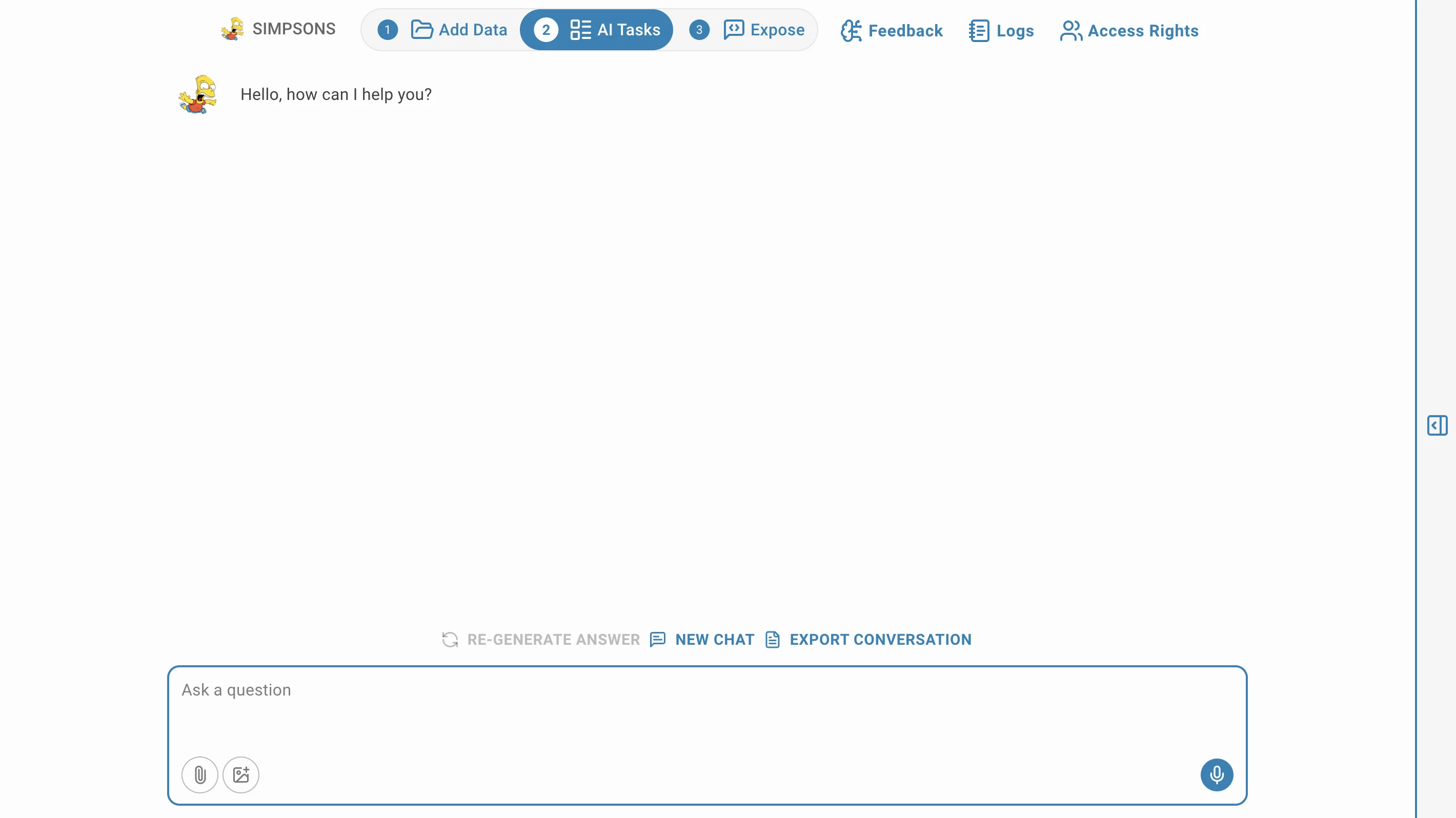

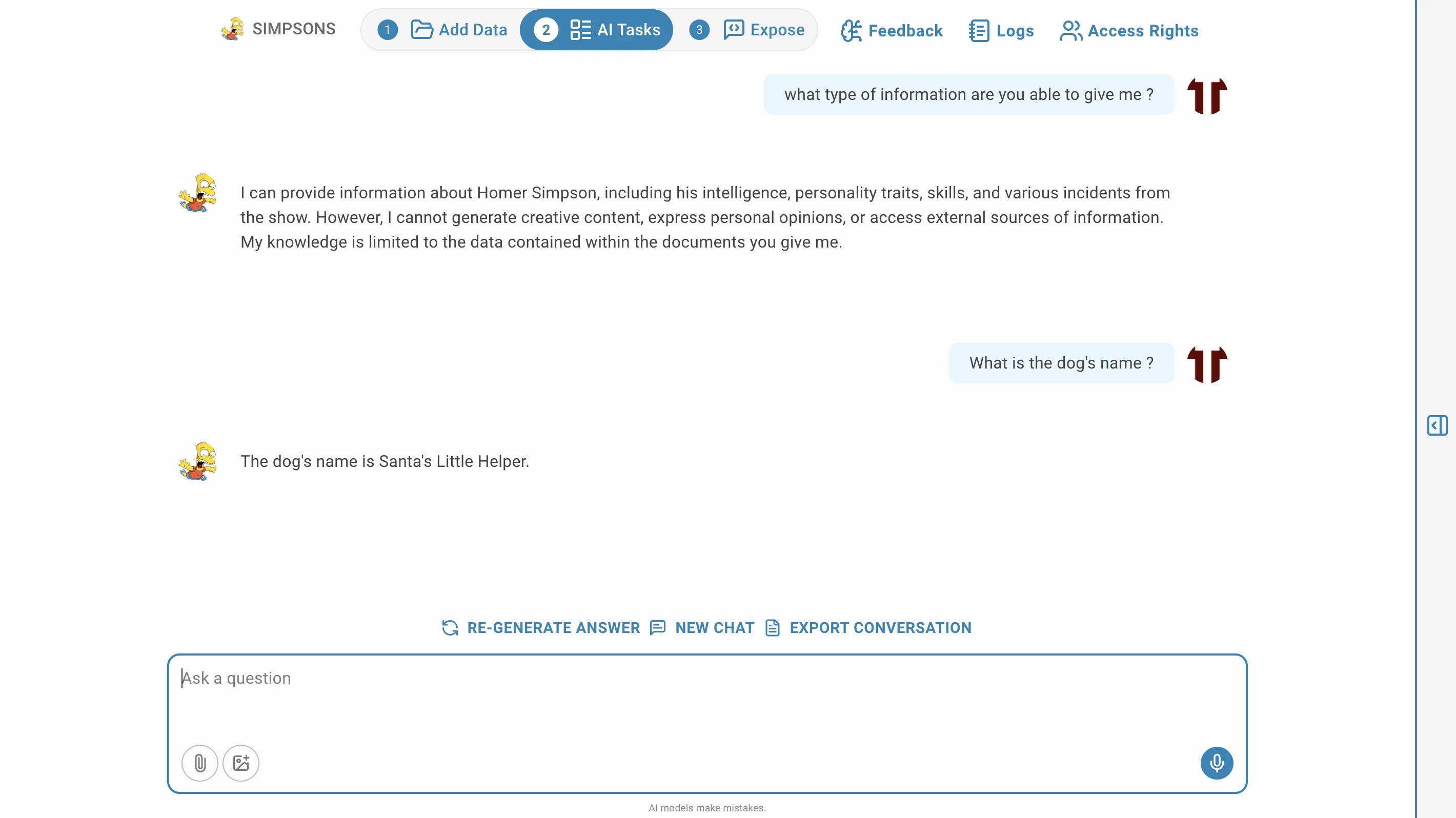

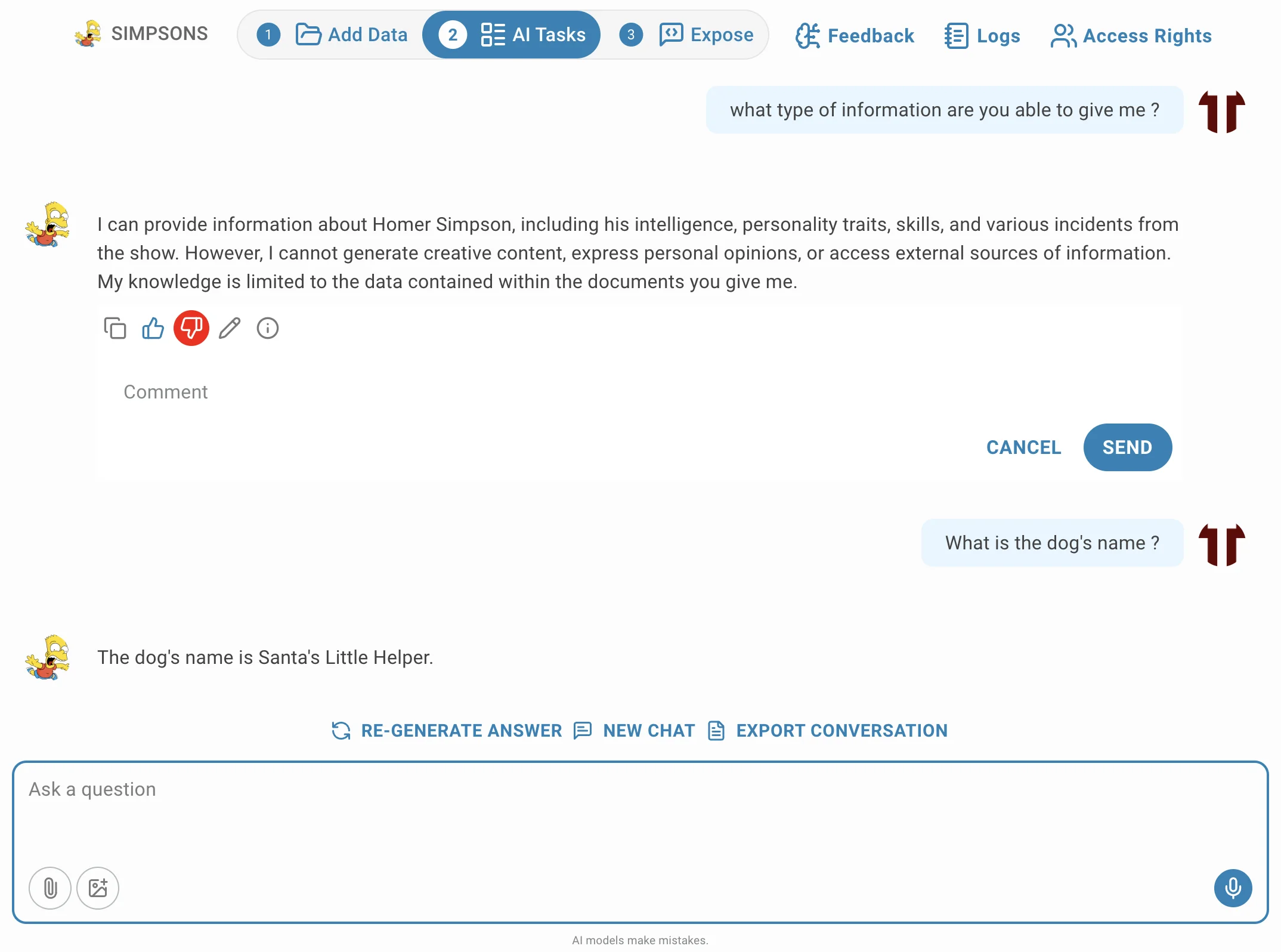

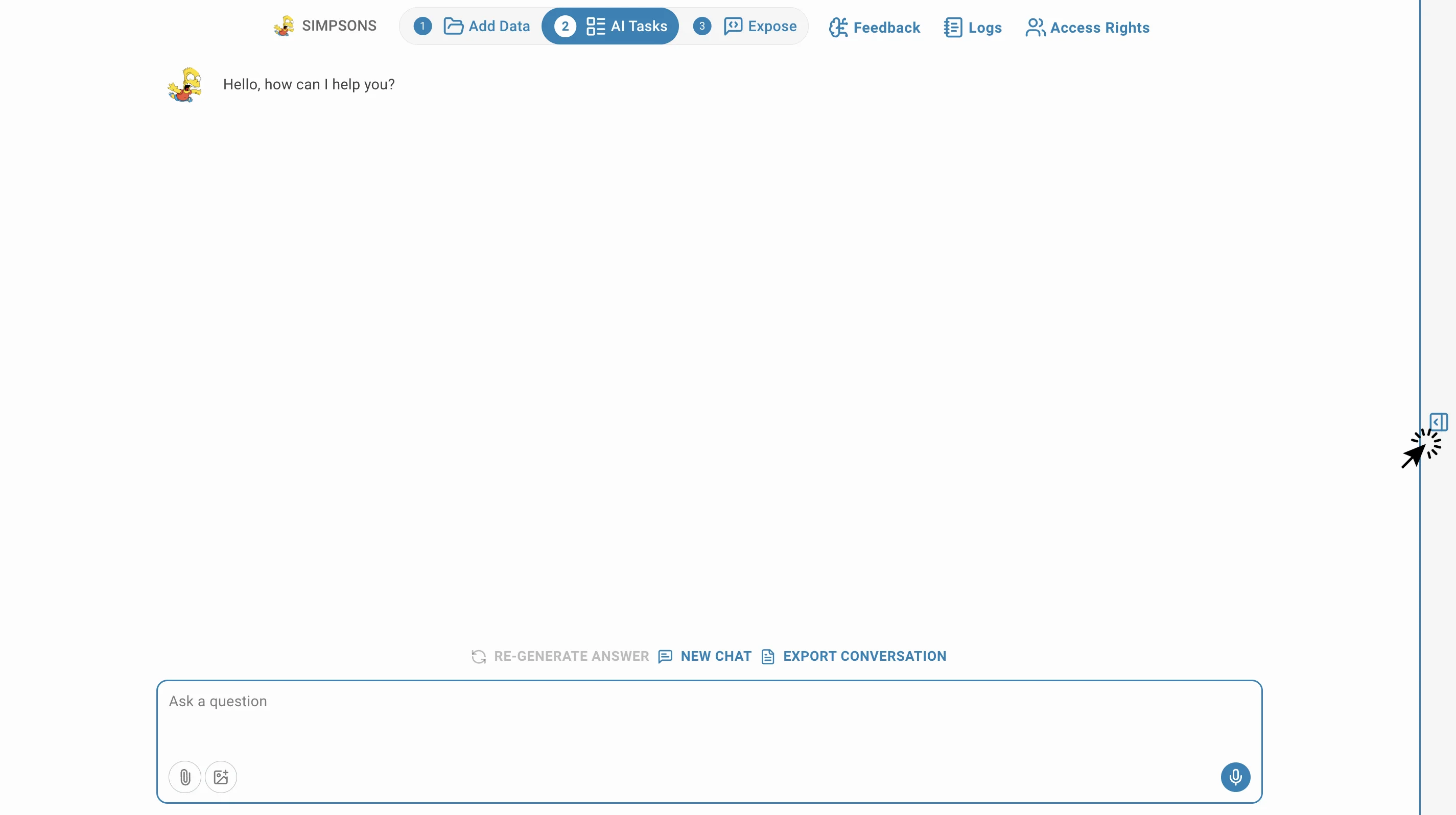

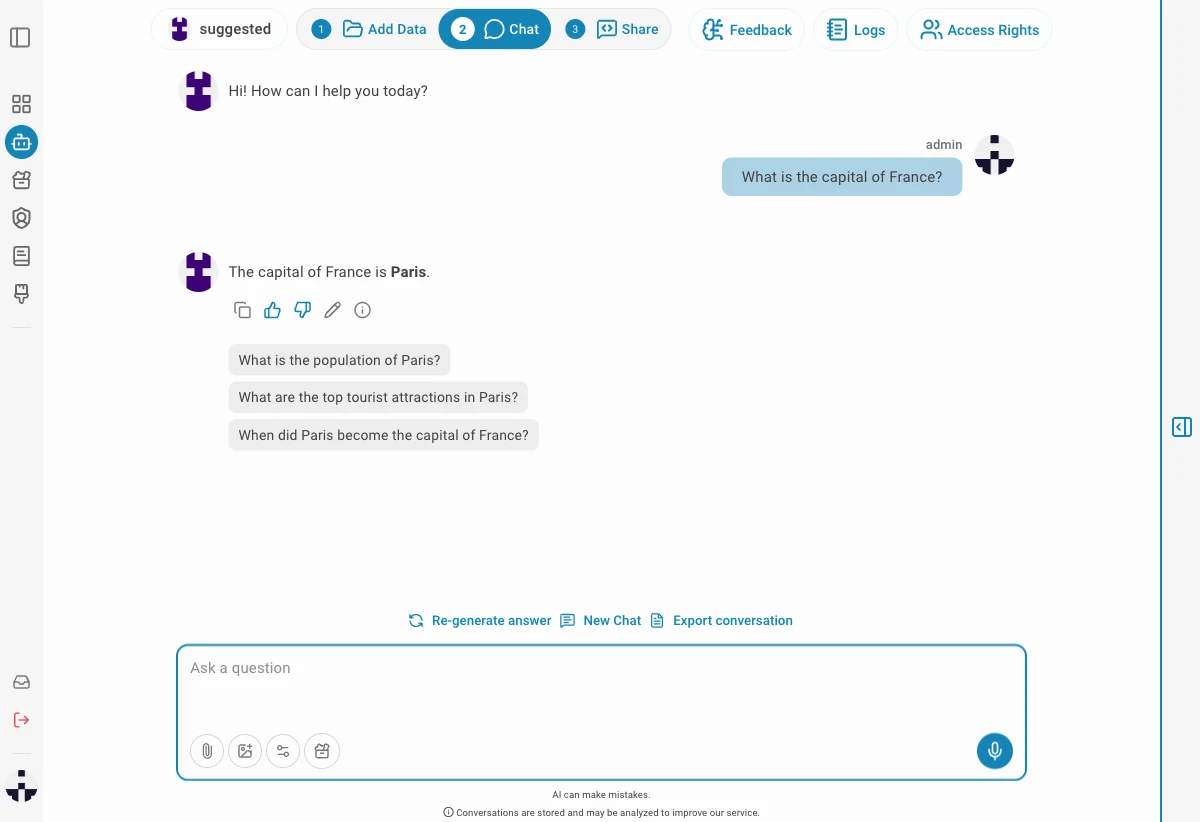

Like a conversation, the Chat section lets you interact with the AI assistant you just built.

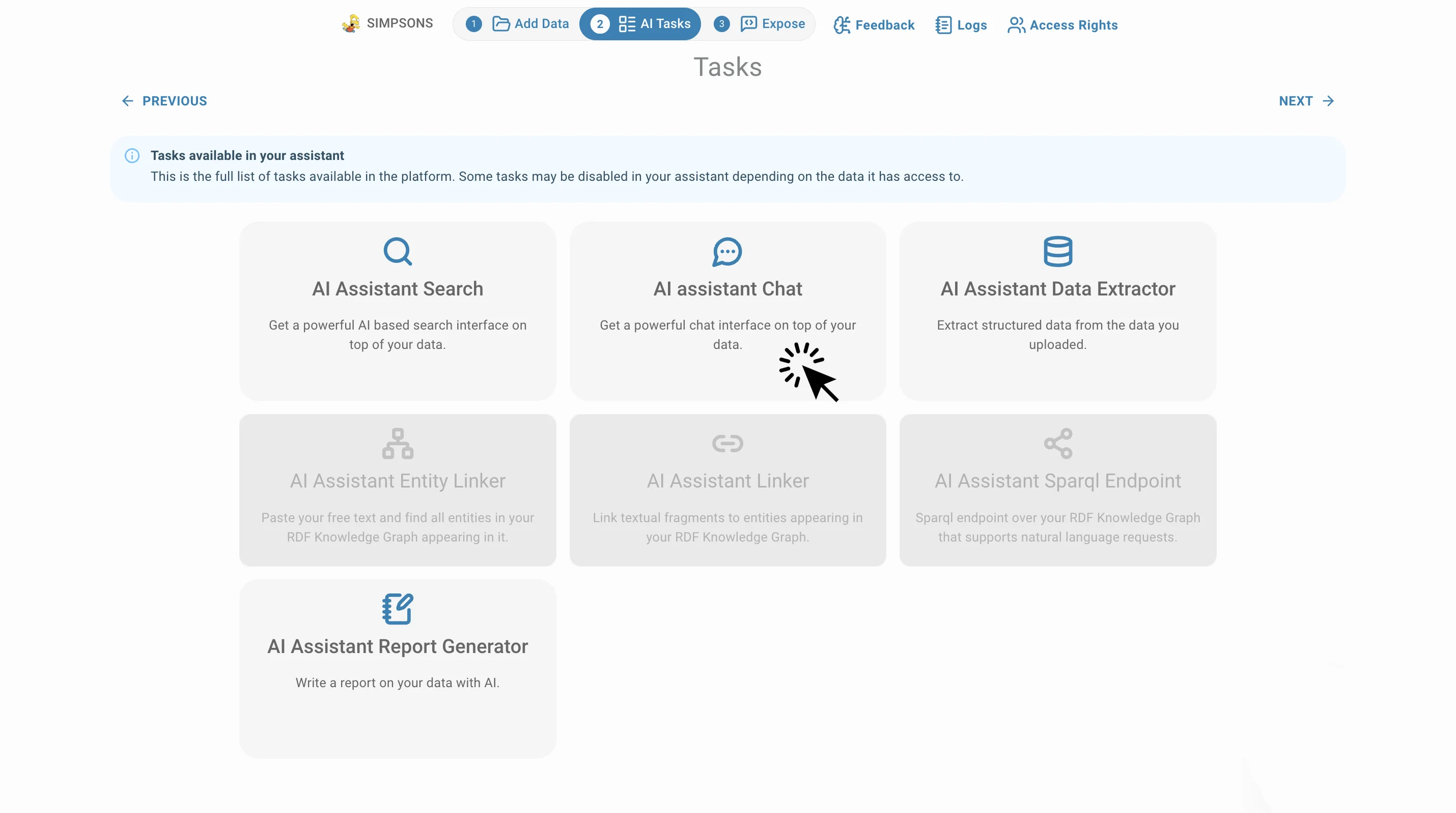

To access this AI task, click AI Tasks, then Chat:

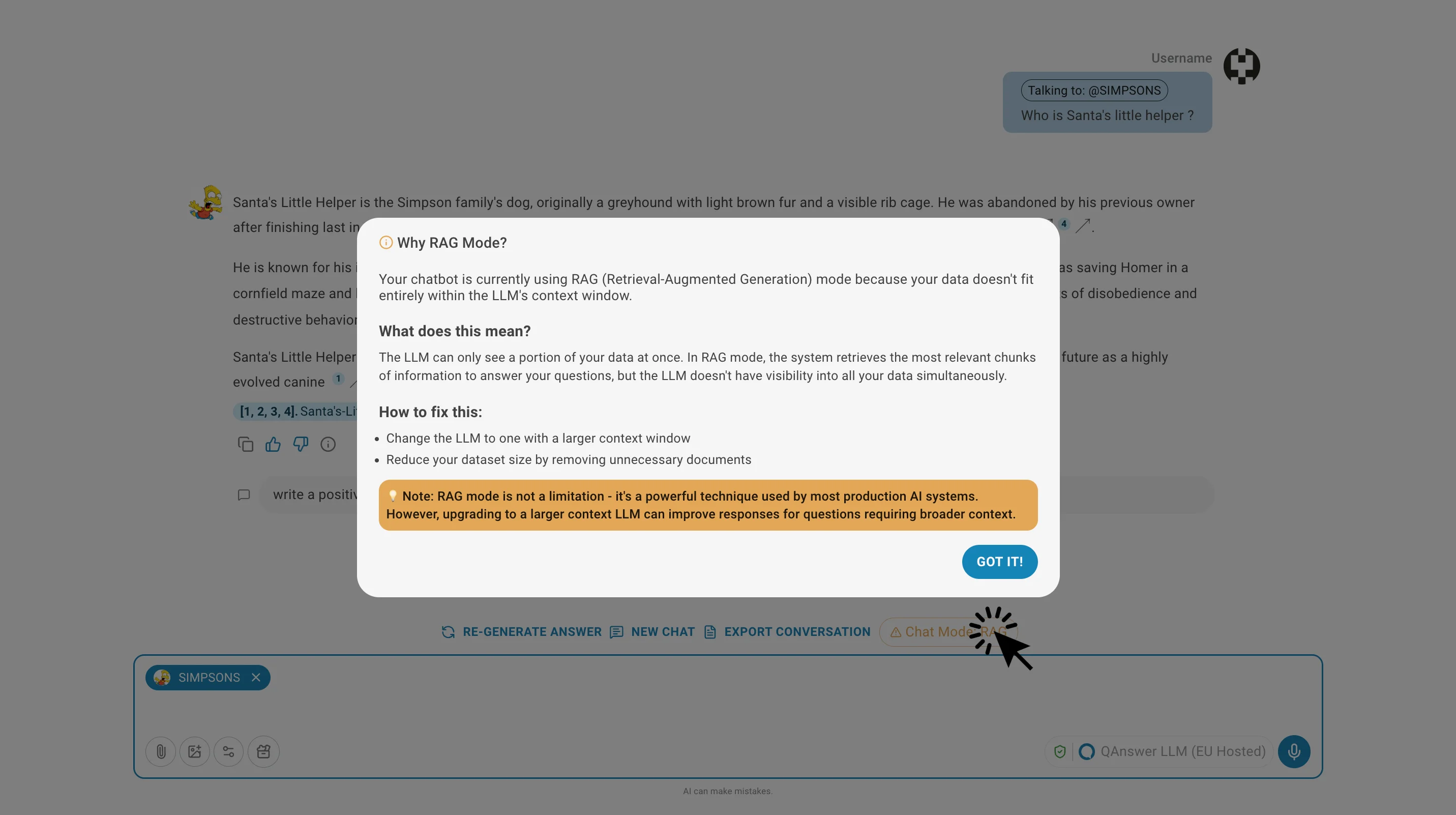

The AI assistant's response is a synthesized answer based on all the documents QAnswer found for the last chat message you sent. Because the answer comes from a generative model, its wording may differ from one generation to the next, but it should always contain the same information.

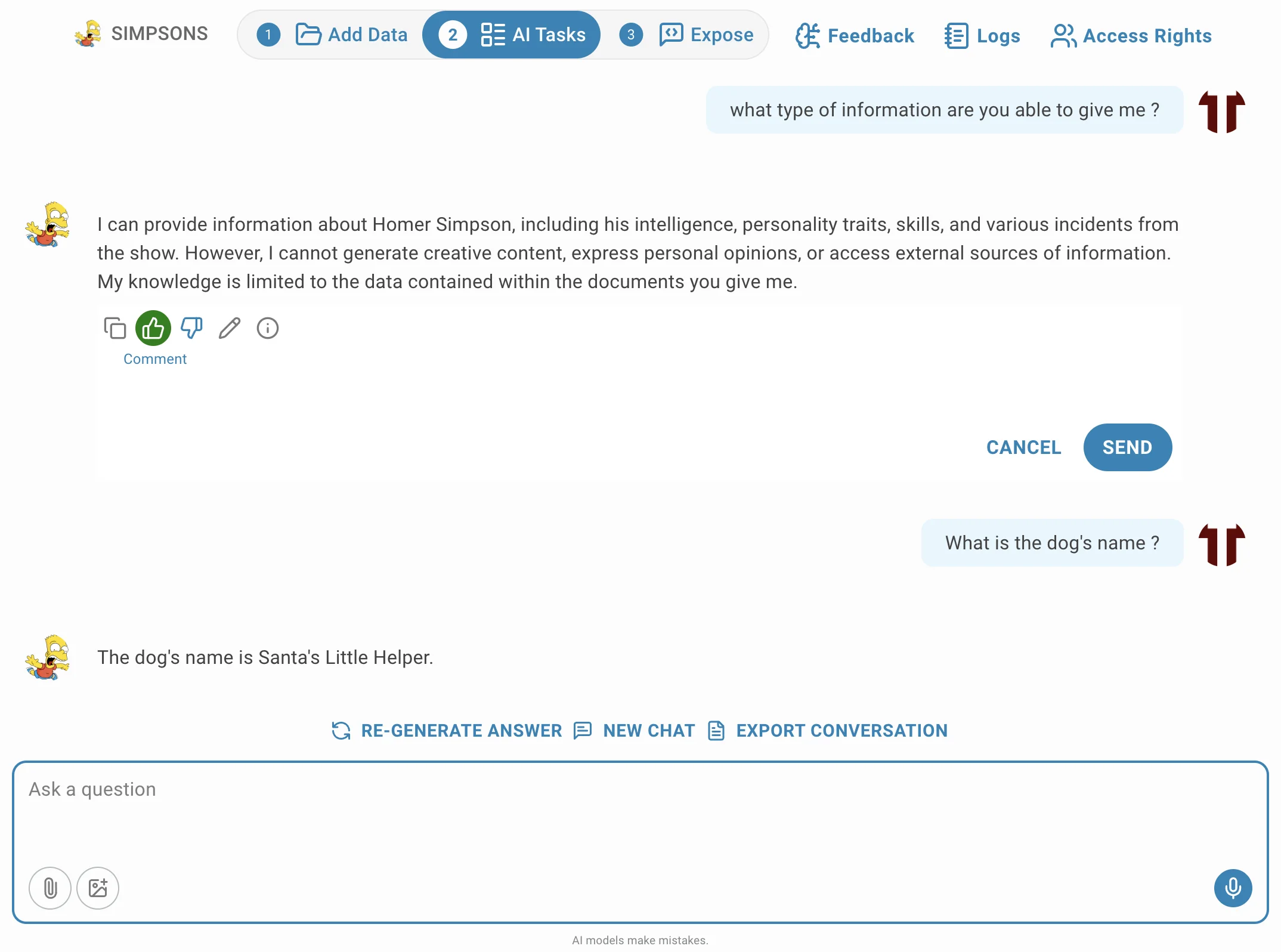

To help improve the quality of the responses, you can give feedback by clicking the thumbs up or thumbs down icon.

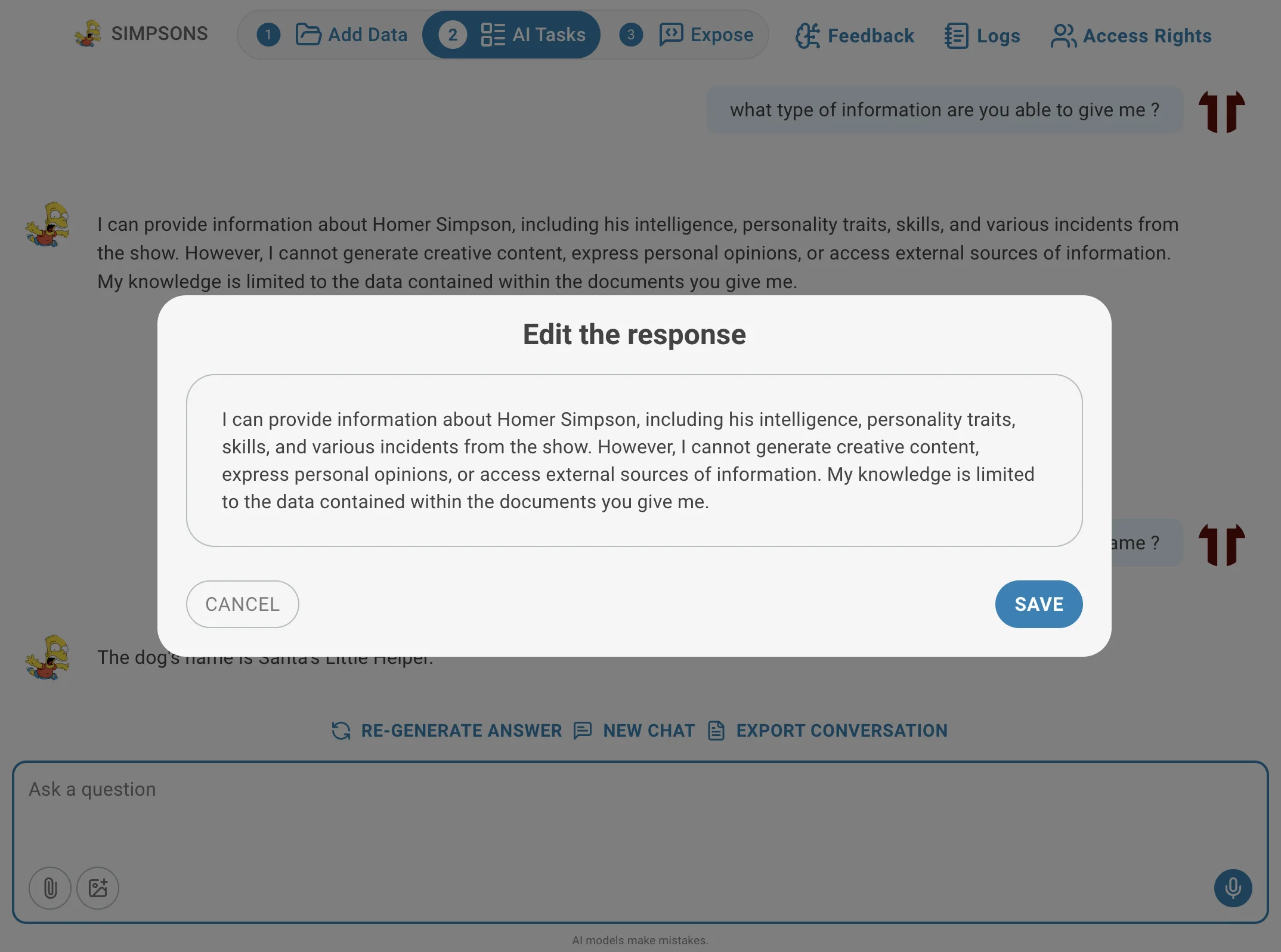

You can also edit the generated answer by clicking the edit icon.

Task Settings

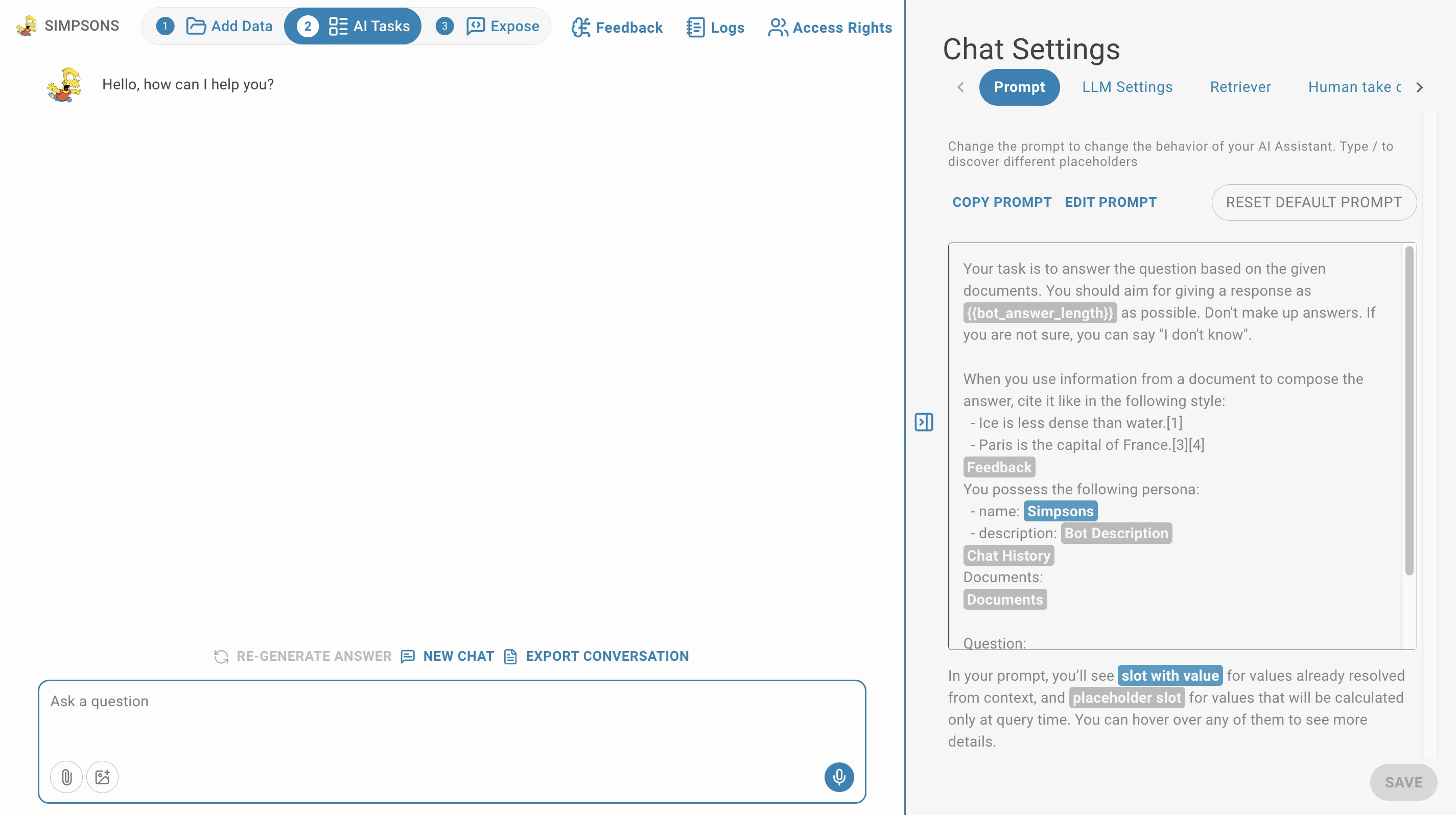

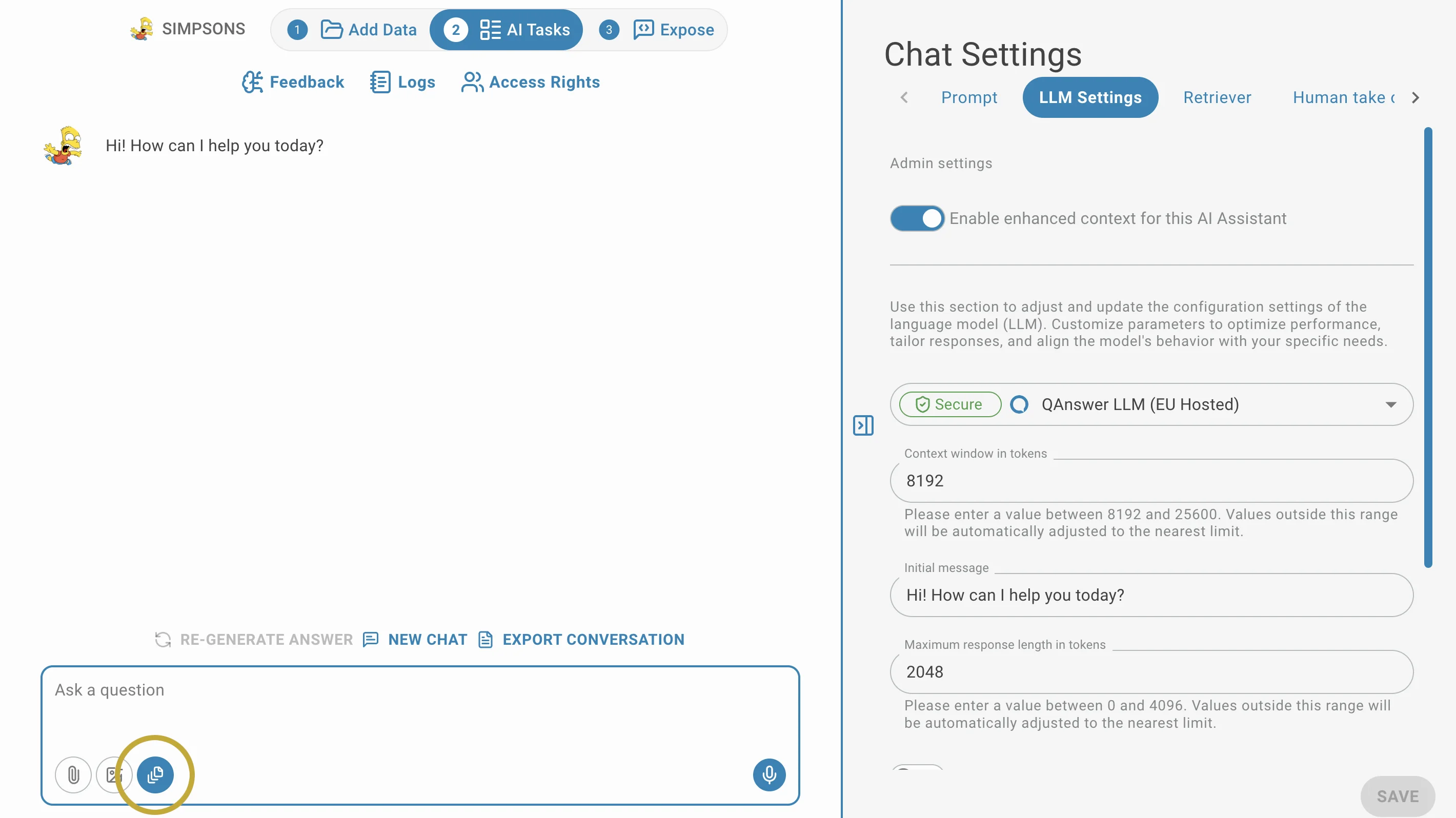

To customize your Chat AI assistant, click the settings panel icon. You can customize how your AI assistant answers by:

- Changing the prompt in the prompt editor: adapt how the AI assistant behaves.

- Adjusting the LLM settings: choose the LLM that powers the answers, the initial message, the answer length, the creativity level, and the answer speed.

- Adjusting the Retriever: choose the LLM reference level and add synonyms.

- Enabling Human Takeover: allow users to request a human to take over the chat if needed.

- Configuring Advanced Filters: allow users to filter the documents used to answer questions based on specific metadata.

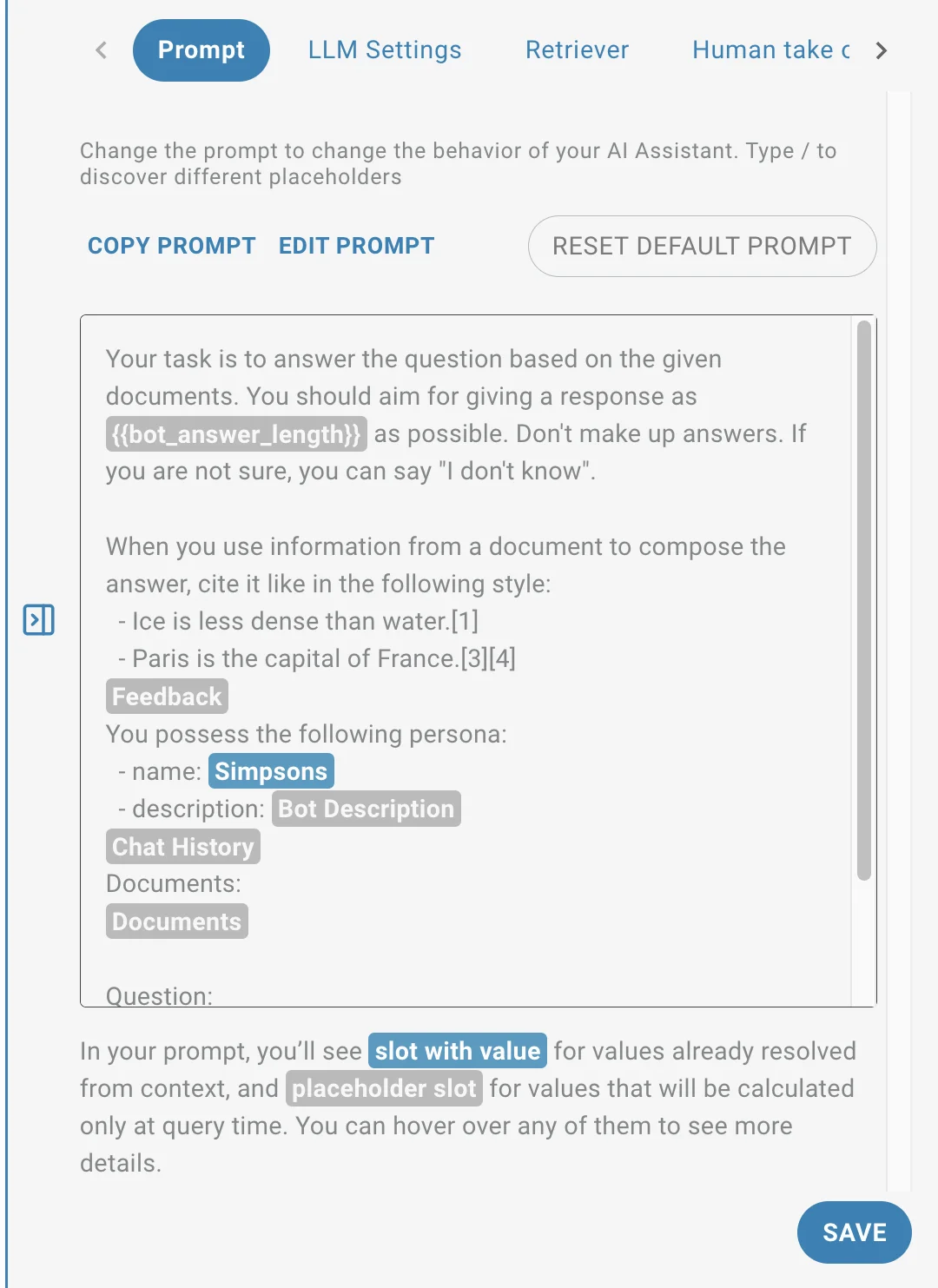

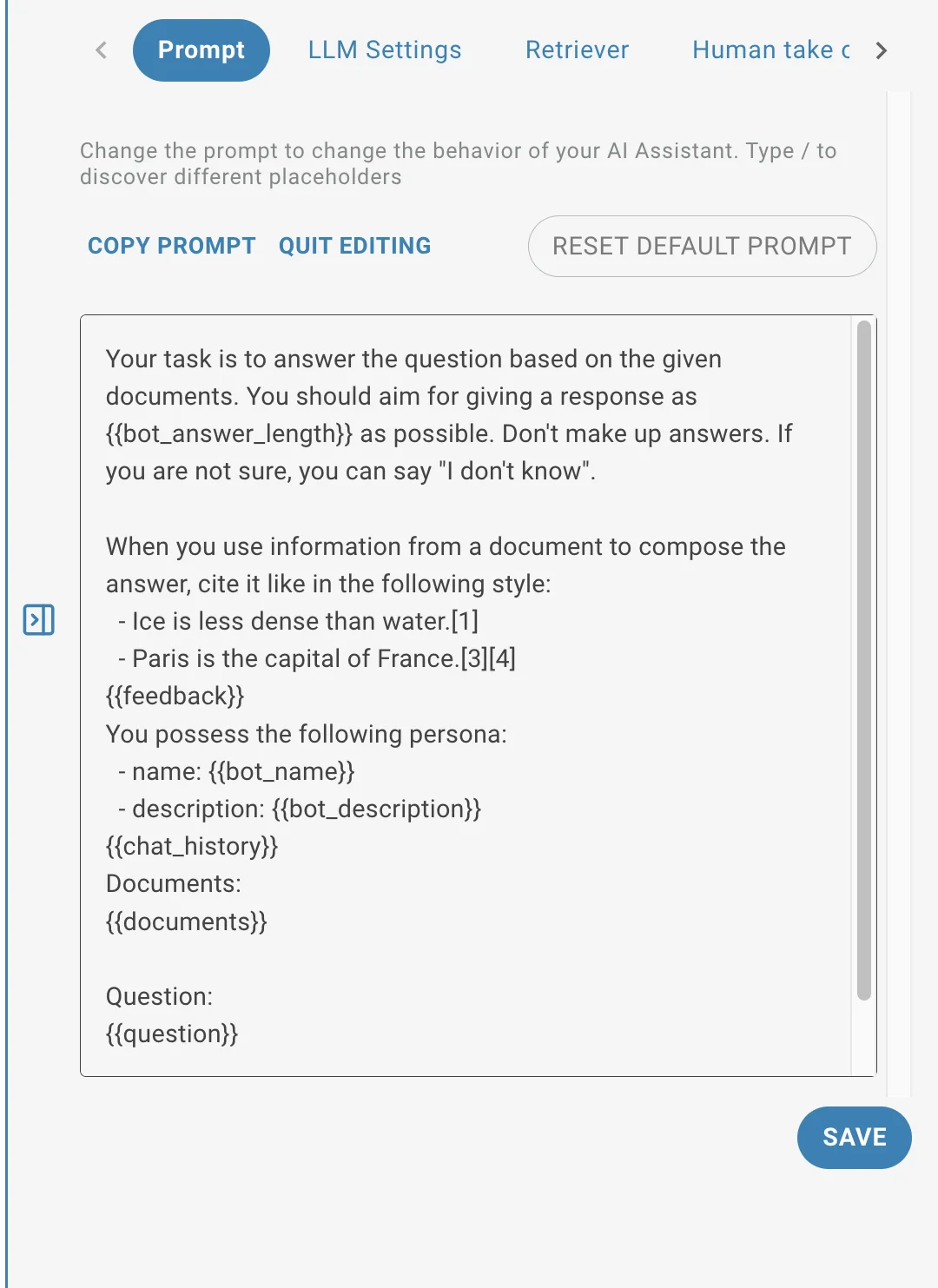

Prompt Editor

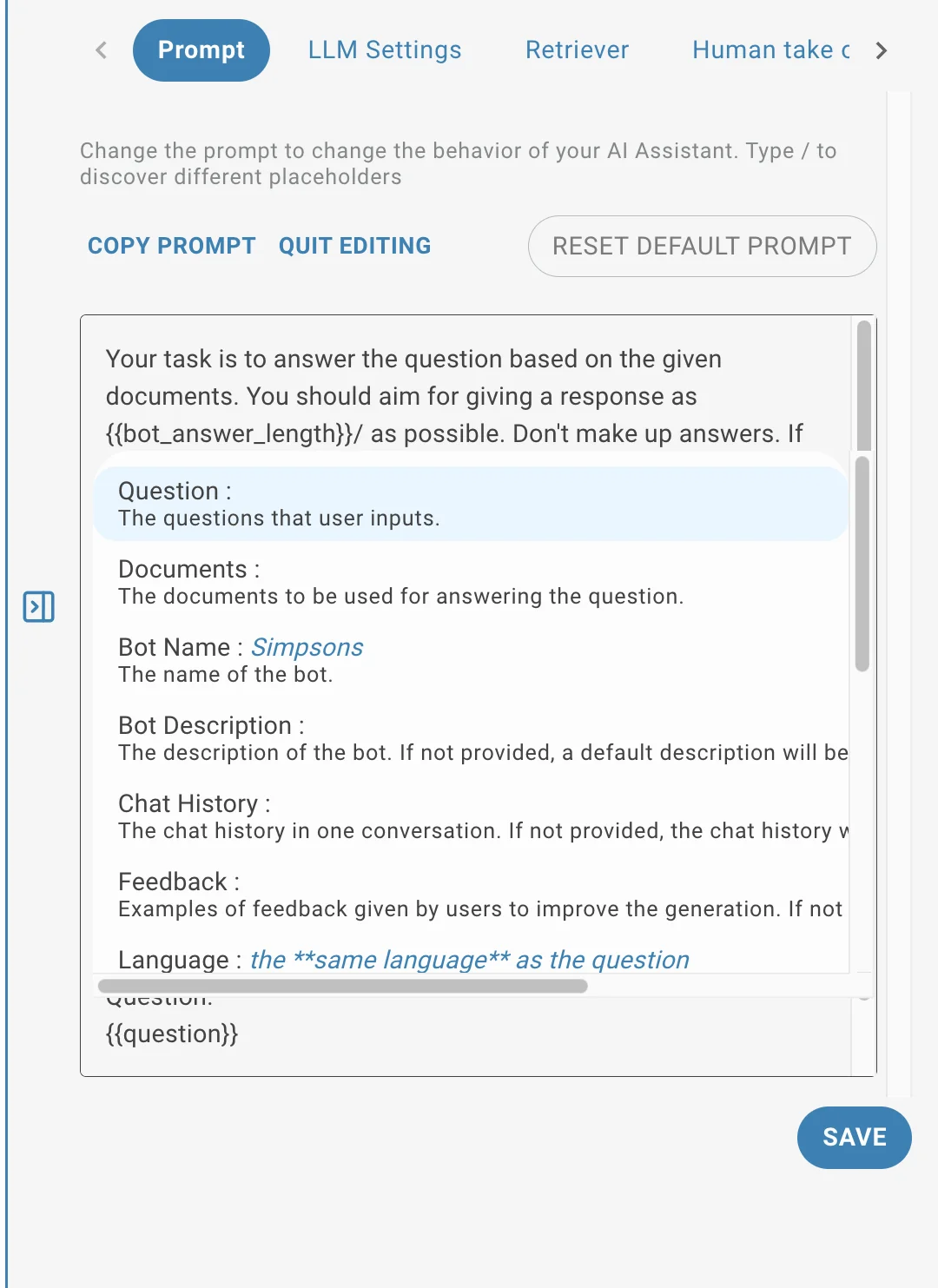

The prompt editor lets you fully customize the behavior of your AI assistant. You can define its personality, how it should respond to queries, what style it should use, and much more. Slot variables such as {{bot_name}} or {{bot_answer_length}} let you inject dynamic values into the prompt.

Press / to display the list of available variables. Refer to the slots section for more information on how to use them.
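As a rough illustration of what slot substitution does, the sketch below replaces each `{{slot}}` in a prompt with a value. It is not QAnswer's implementation: the function `render_prompt` and the slot values shown are hypothetical, and only the slot names `bot_name` and `bot_answer_length` come from this page.

```python
import re


def render_prompt(template: str, slots: dict[str, str]) -> str:
    """Replace each {{slot}} with its value; unknown slots are left untouched."""

    def substitute(match: re.Match) -> str:
        name = match.group(1)
        return str(slots.get(name, match.group(0)))

    return re.sub(r"\{\{\s*(\w+)\s*\}\}", substitute, template)


# Hypothetical template and values, for illustration only.
template = "You are {{bot_name}}. Answer length: {{bot_answer_length}}."
rendered = render_prompt(template, {"bot_name": "Ada", "bot_answer_length": "short"})
print(rendered)
```

Leaving unknown slots untouched makes a misspelled variable easy to spot in the rendered prompt.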

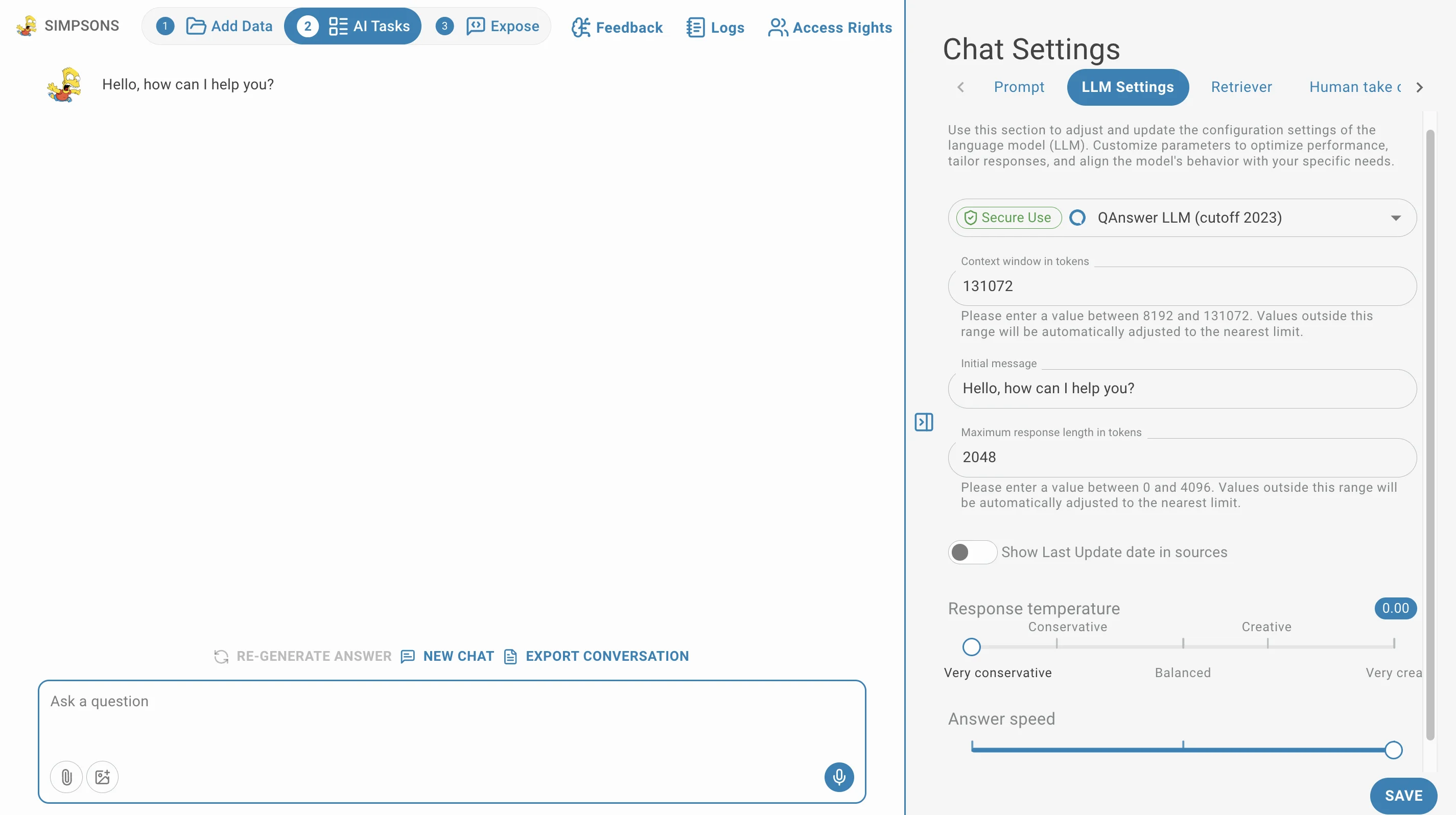

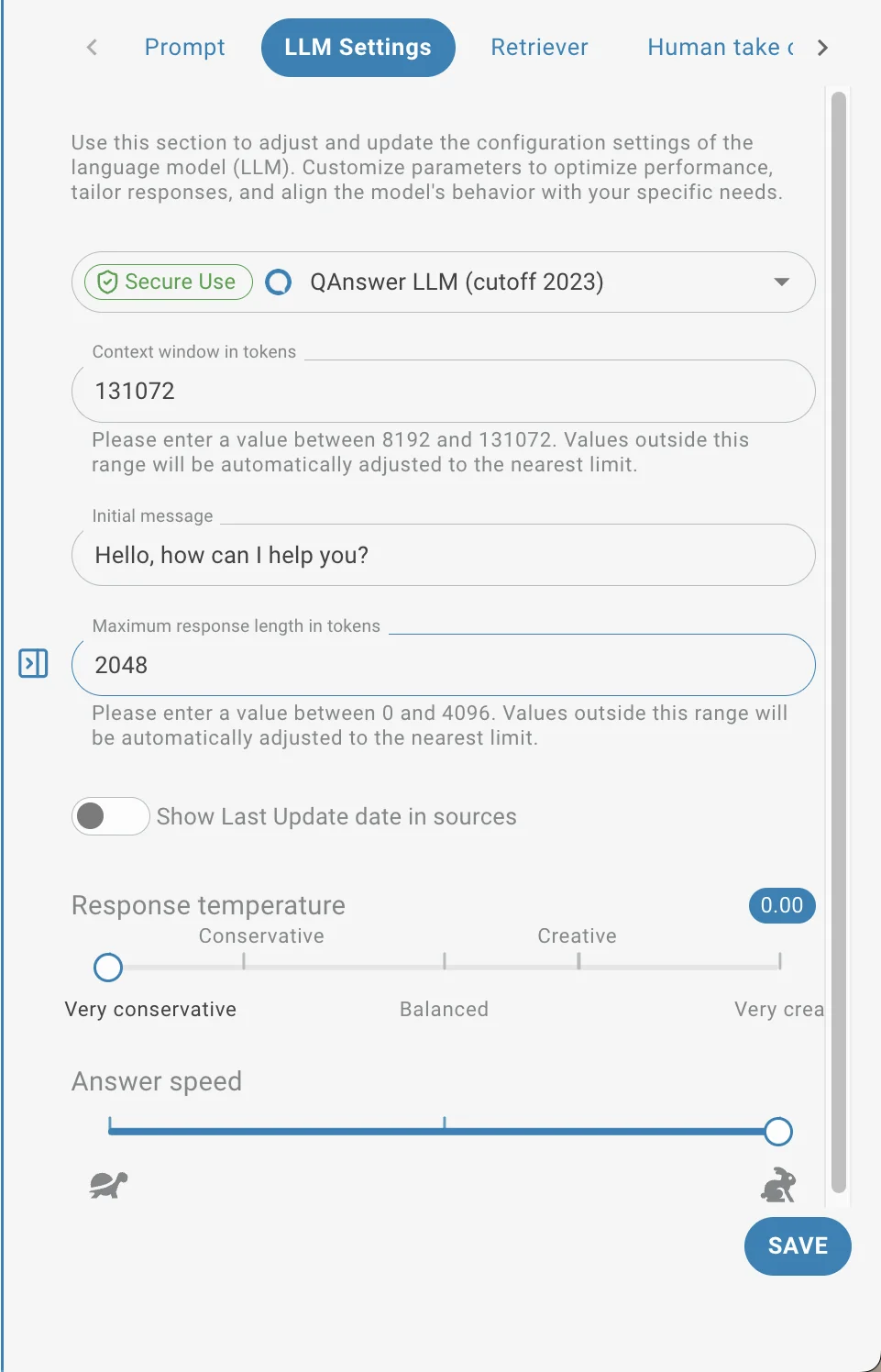

LLM Settings

In the LLM settings, you can adjust the parameters of the LLM that powers your AI assistant, such as:

- the LLM model unstructured

- the initial message

- the context window (in tokens): how much text (conversation plus documents) the model can consider at once. If your input exceeds this limit, earlier content may be dropped.

- whether to show or hide the last update date in sources

- the maximum answer length: a cap on the number of tokens the model returns, which prevents overly long outputs

- the creativity level (temperature)

- the answer speed

Practical tip: use a low temperature for factual extraction, increase the context window to include long documents, and set a maximum response length to control output size and cost.
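To make the effect of the context window concrete, here is a minimal sketch of how earlier content can be dropped when the input exceeds the limit. It assumes a crude token estimate (whitespace-separated words) and a hypothetical helper `fit_to_context`; real tokenizers and QAnswer's own truncation strategy may differ.

```python
def fit_to_context(messages: list[str], context_window: int) -> list[str]:
    """Keep the most recent messages whose combined token count fits the window."""
    kept: list[str] = []
    used = 0
    # Walk backwards so the newest messages are kept first.
    for message in reversed(messages):
        cost = len(message.split())  # assumption: one word is roughly one token
        if used + cost > context_window:
            break
        kept.append(message)
        used += cost
    return list(reversed(kept))


history = ["first question about pricing", "a long answer " * 5, "follow-up question"]
print(fit_to_context(history, context_window=12))
```

With a window of 12, only the latest message fits, so the two earlier ones are dropped. A larger window keeps the whole history.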

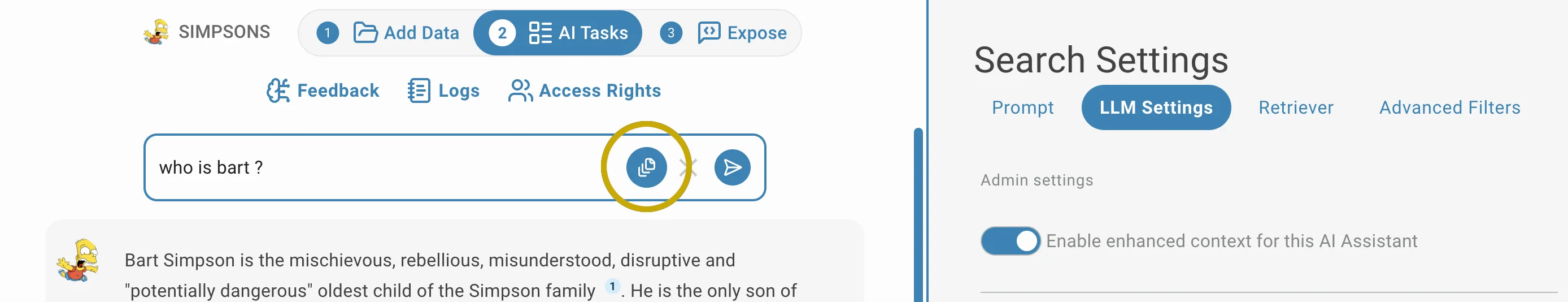

Enhance Context

This feature can be accessed by 2 settings:

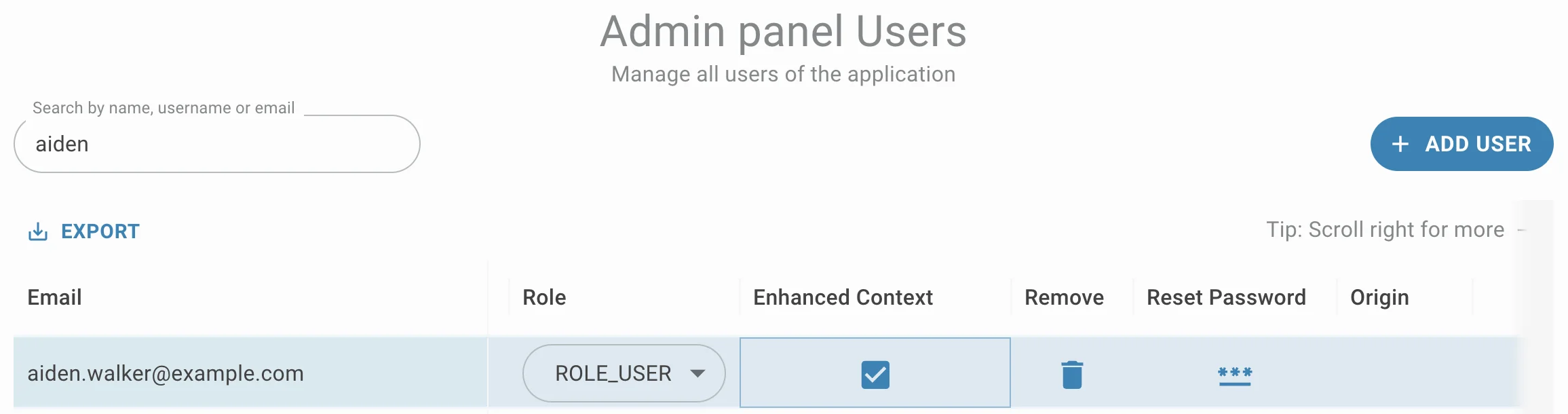

- Per-user access (controlled by admins via user table)

- Per-assistant access (in the settings panel)

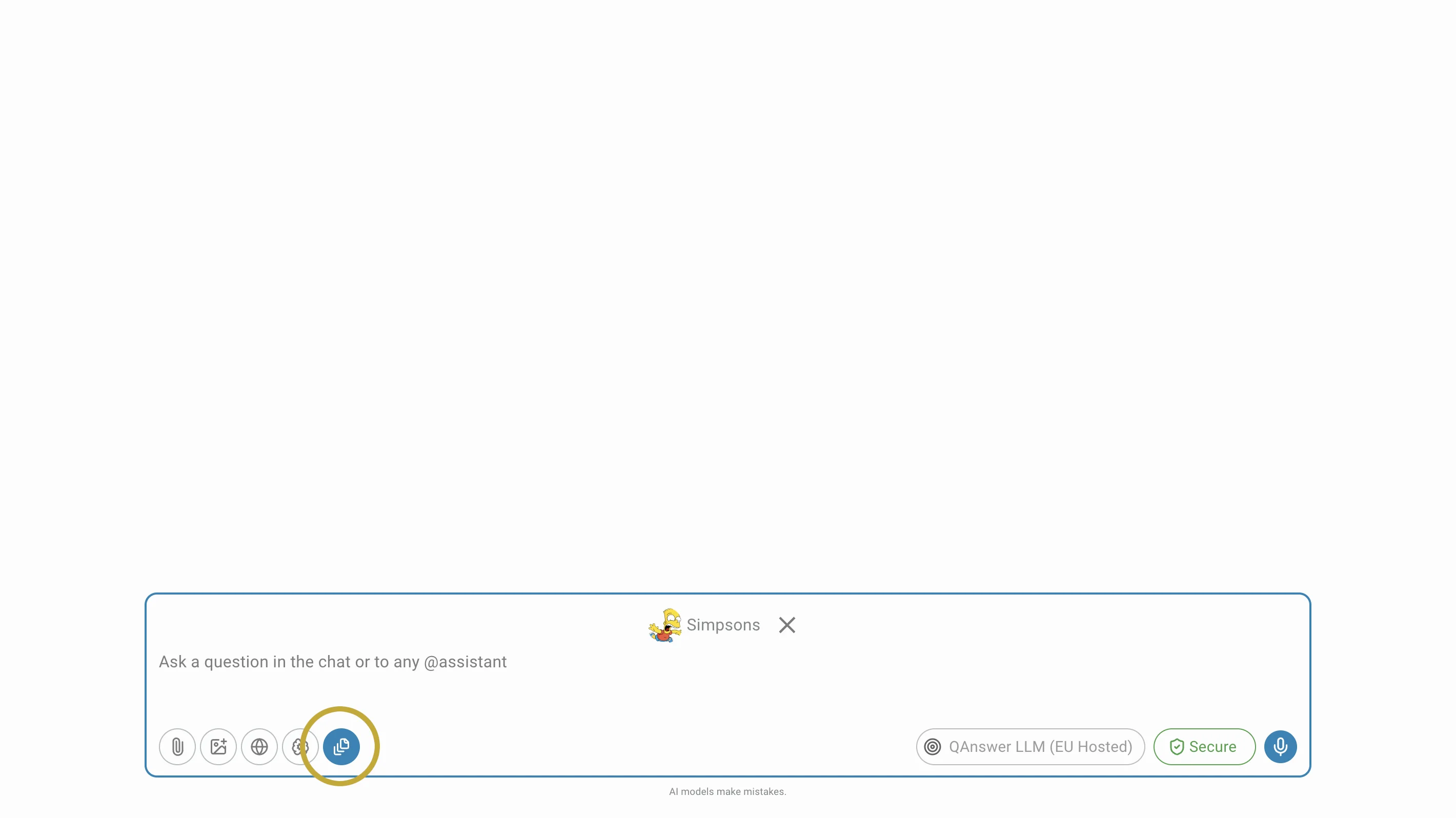

If the option is checked in the Admin Panel settings, the toggle is visible in the Search and Chat AI Task interfaces, and in the chatbot input box when the AI assistant is tagged.

The feature is inactive by default. If the toggle is set to active, the AI assistant will be able to process large documents more effectively. If your document is too big for the AI to read all at once, it will automatically break the document into smaller parts, understand each part, and then combine the answers. This helps the AI consider the whole document when answering your question, even if the file is large.
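The split, process, and combine behavior described above can be sketched as follows. This is only an illustration under stated assumptions: `ask_model` stands in for any LLM call, `part_size` is a hypothetical word-based limit, and the prompts are invented. How QAnswer implements this internally is not documented here.

```python
from typing import Callable


def answer_over_large_document(
    document: str,
    question: str,
    ask_model: Callable[[str], str],
    part_size: int = 200,
) -> str:
    """Answer a question over a document too large to send in one request."""
    words = document.split()
    # 1. Break the document into smaller parts.
    parts = [" ".join(words[i:i + part_size]) for i in range(0, len(words), part_size)]
    # 2. Ask the model about each part separately.
    partial_answers = [ask_model(f"Question: {question}\nText: {part}") for part in parts]
    if len(partial_answers) == 1:
        return partial_answers[0]
    # 3. Combine the partial answers into a single response.
    combined = "\n".join(partial_answers)
    return ask_model(f"Question: {question}\nCombine these partial answers:\n{combined}")


# A fake model that records its prompts, so the flow can be observed without an LLM.
calls: list[str] = []


def fake_model(prompt: str) -> str:
    calls.append(prompt)
    return f"answer {len(calls)}"


result = answer_over_large_document("word " * 450, "What is this about?", fake_model)
print(len(calls), result)
```

Here a 450-word document is split into three parts, so the model is called three times for the parts and once more to combine them.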

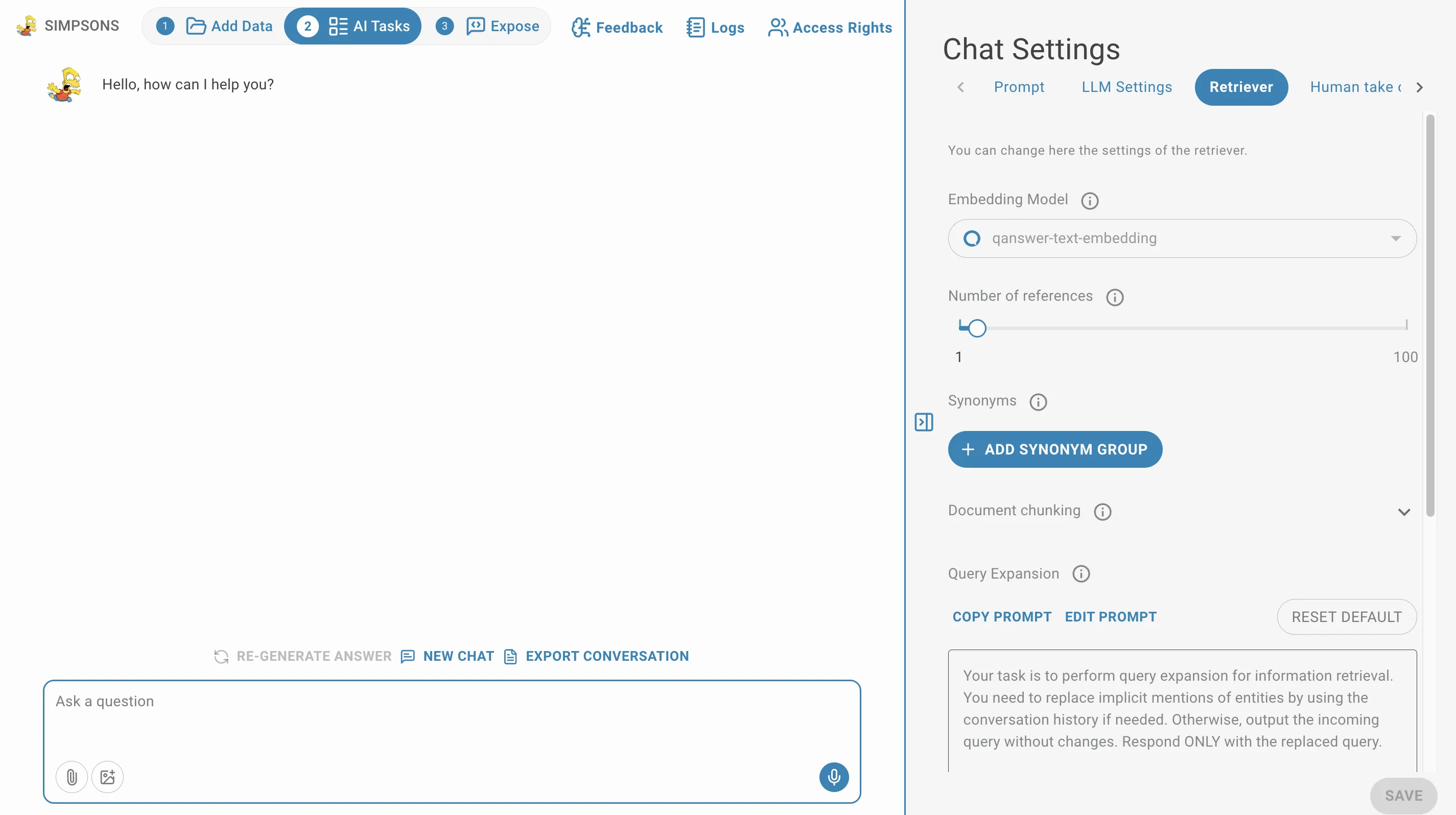

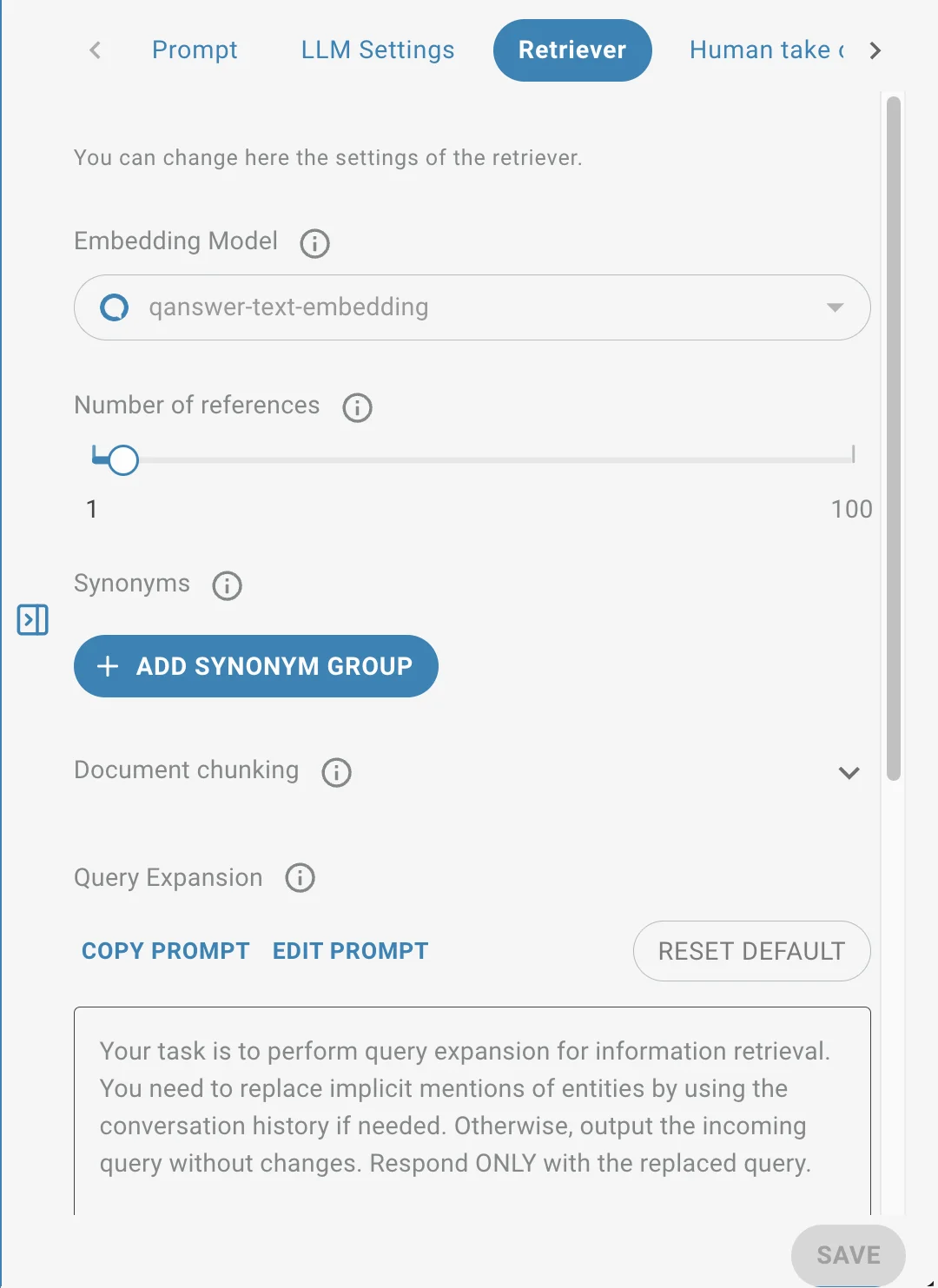

Retriever Settings

In the retriever settings, you can adjust the parameters of the retriever that fetches relevant documents for your AI assistant. They include:

- the embedding model used (note that changing it completely reindexes your AI assistant)

- the number of references passed to the LLM

- the synonyms used to retrieve more relevant documents

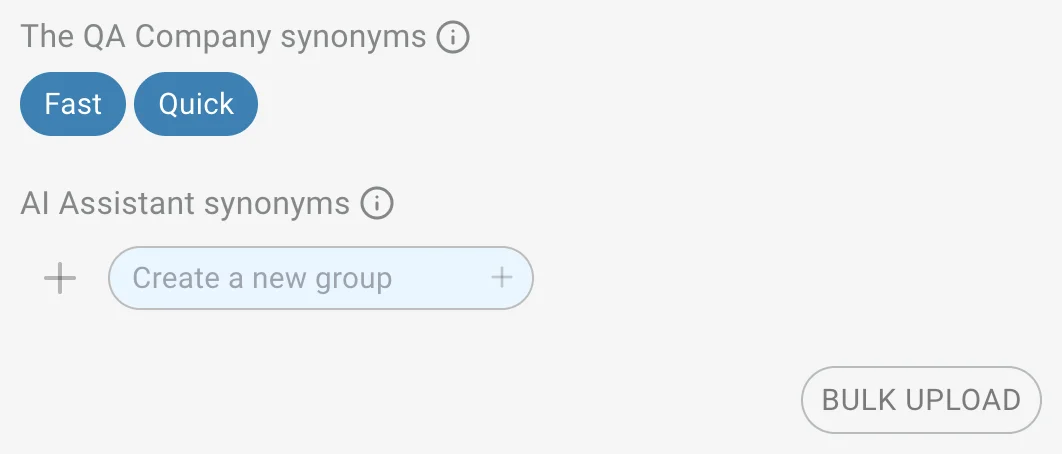

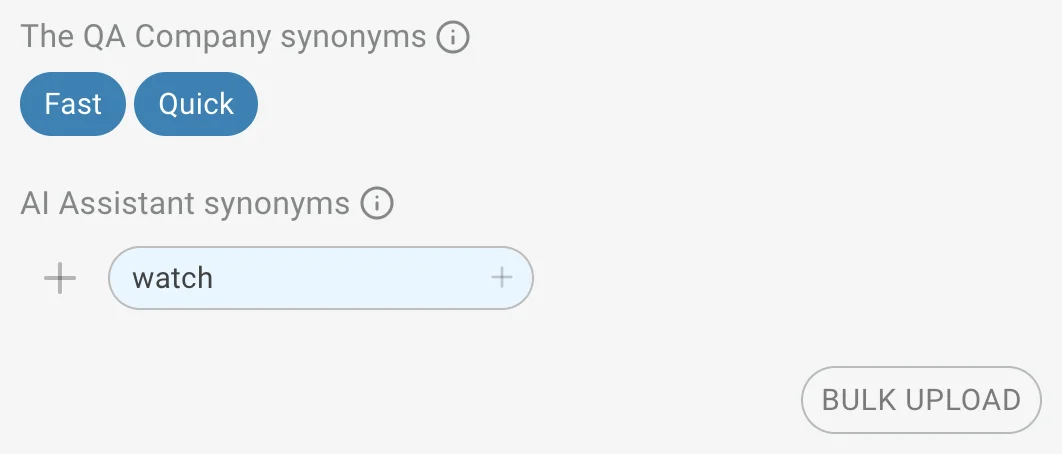

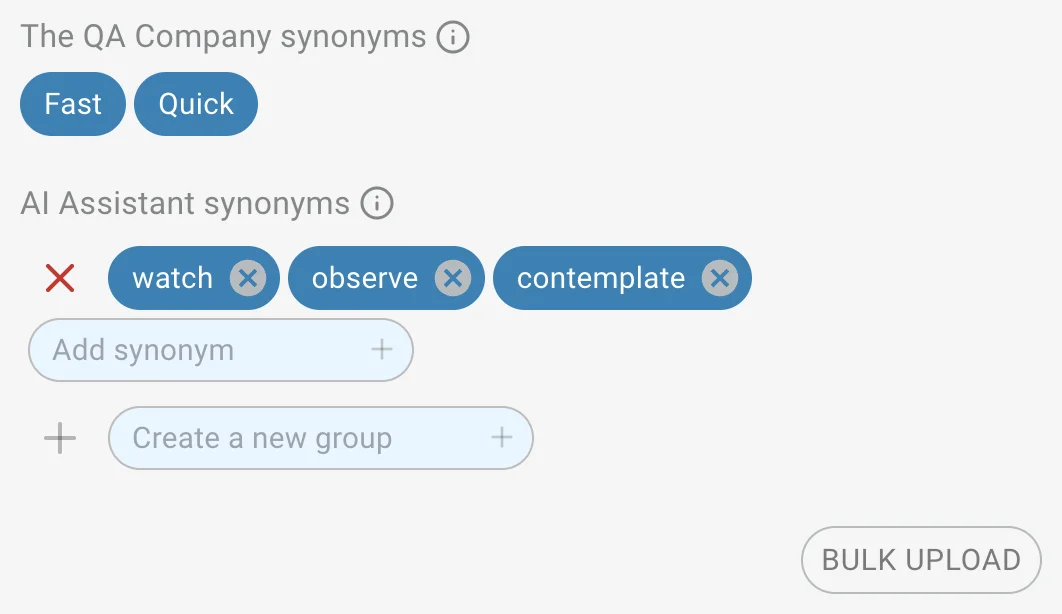

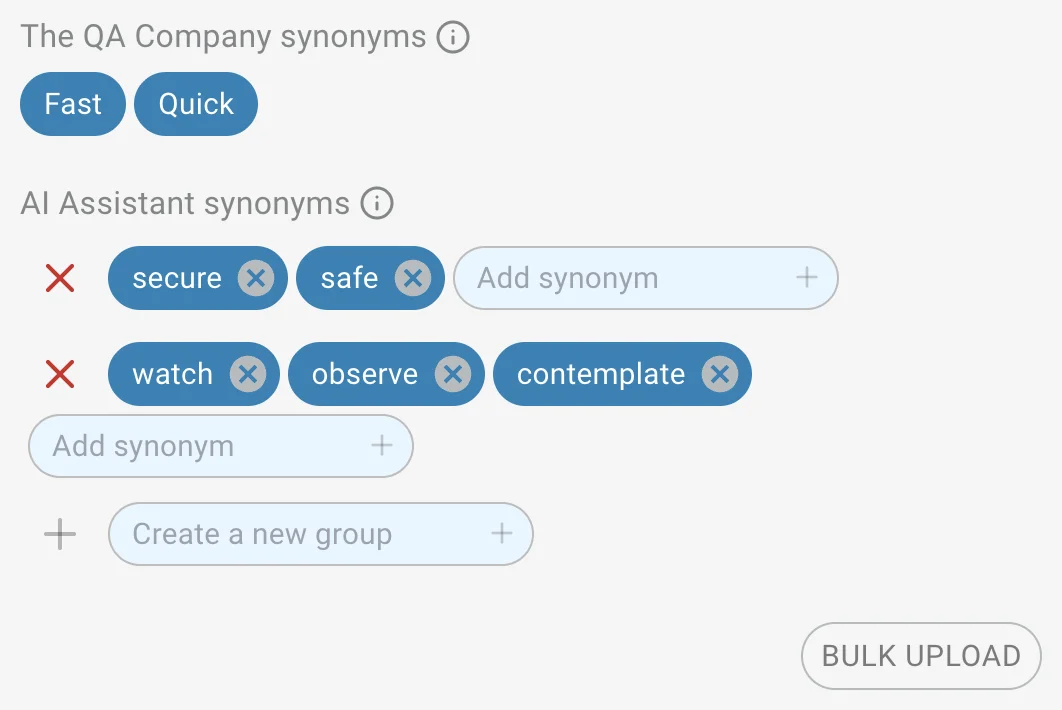

Synonyms

In the section dedicated to synonyms you can define groups of synonyms that will be used to retrieve more relevant documents.

- Click on + Add synonym group to add a new group of synonyms.

- Enter a word in the input field and press Enter or click the + button to add it to the list of synonyms.

- You can only add a new group if there are no existing groups, or if the last one has at least one synonym and the input field is empty.

- You can delete a group by clicking the trash icon.

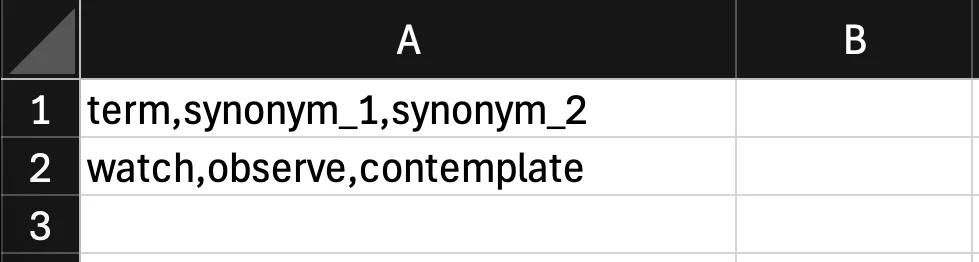

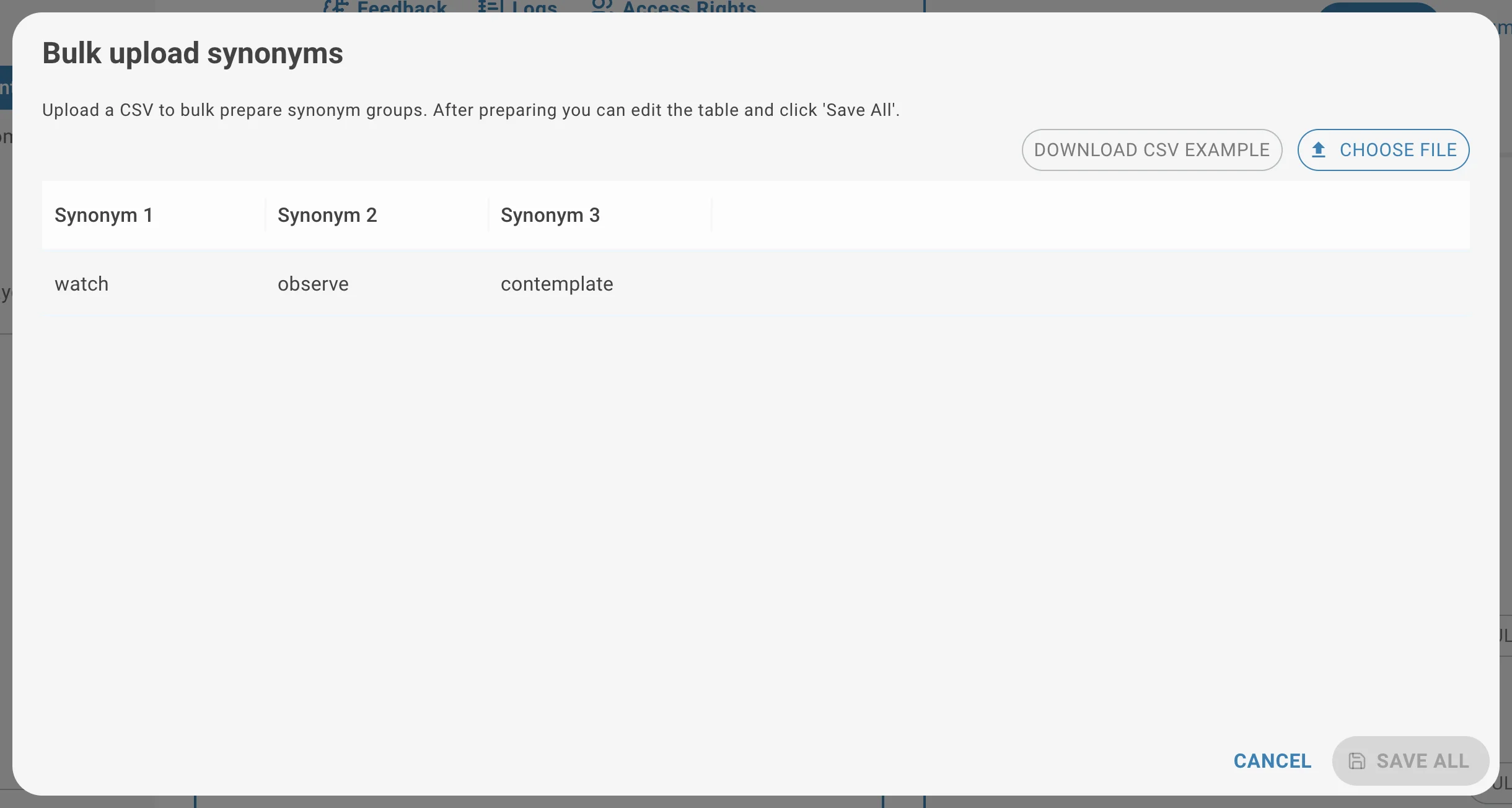

- You can upload a list of synonyms by clicking the Bulk Upload button. You can then download the example CSV to learn the format, or click Choose File to upload your own CSV.
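To show why synonym groups help retrieval, the sketch below expands a query with every word from any group it touches. It is a simplified assumption of how synonyms might be applied: the function `expand_query` and the sample groups are hypothetical, and QAnswer's actual matching logic is not described on this page.

```python
def expand_query(query: str, synonym_groups: list[set[str]]) -> set[str]:
    """Return the query terms plus all synonyms from groups that share a term."""
    terms = set(query.lower().split())
    expanded = set(terms)
    for group in synonym_groups:
        lowered = {word.lower() for word in group}
        if terms & lowered:  # the query mentions at least one word of this group
            expanded |= lowered
    return expanded


# Hypothetical synonym groups, as they might be defined in the settings panel.
groups = [{"car", "automobile", "vehicle"}, {"invoice", "bill"}]
expanded_terms = sorted(expand_query("car invoice status", groups))
print(expanded_terms)
```

A document that only mentions "automobile" or "bill" can now match a query that uses "car" or "invoice".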

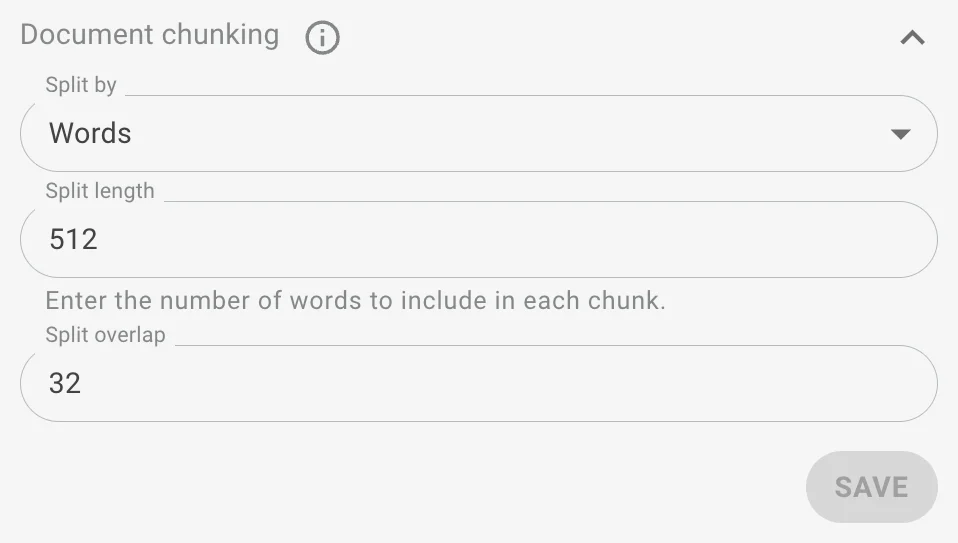

Document Chunking

Document chunking controls how text is split before being embedded and indexed. It impacts retrieval quality and context windows. You have three main parameters:

Split by — Choose the unit used to cut the document: Words, Sentences, or Pages.

Split Length — Defines how big each chunk is, based on the selected unit.

Split Overlap — Defines how much of the previous chunk is carried into the next chunk to preserve context.
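The interaction between the three parameters can be illustrated with a minimal word-based splitter. The function `chunk_words` is a hypothetical sketch that covers only the Words unit, and it assumes the overlap is smaller than the chunk length. The product's own splitter may handle edge cases differently.

```python
def chunk_words(text: str, split_length: int, split_overlap: int) -> list[str]:
    """Split text into chunks of split_length words, sharing split_overlap words."""
    if split_length <= 0:
        raise ValueError("split_length must be positive")
    if not 0 <= split_overlap < split_length:
        raise ValueError("split_overlap must be >= 0 and smaller than split_length")
    words = text.split()
    step = split_length - split_overlap  # how far the window advances each time
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + split_length]))
        if start + split_length >= len(words):
            break  # the last chunk already reaches the end of the text
    return chunks


chunks = chunk_words("one two three four five six seven", split_length=4, split_overlap=2)
for chunk in chunks:
    print(chunk)
```

With a length of 4 and an overlap of 2, each chunk repeats the last two words of the previous one, so a sentence cut at a chunk boundary still appears whole in at least one chunk when it is short enough.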

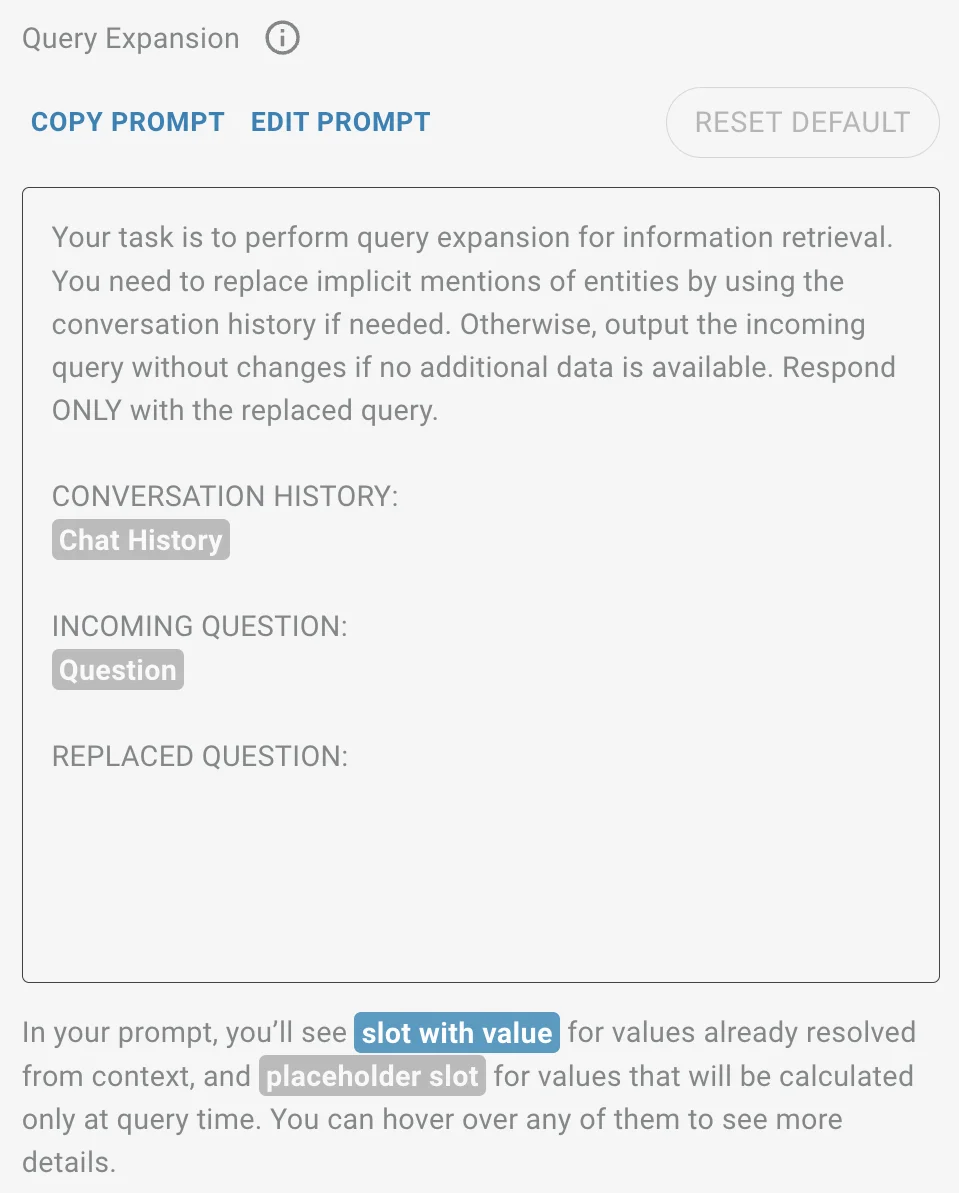

Query Expansion

Query expansion is used to reformulate user queries so that they include the necessary context for retrieving the correct documents. This is especially important when users ask follow-up questions or when queries involve time references.
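One common way to reformulate a follow-up question is to give a rewriting model the conversation history and the current date. The sketch below only assembles such a prompt. The function name, the prompt wording, and the sample conversation are all hypothetical, and this is not the prompt QAnswer uses.

```python
from datetime import date


def build_expansion_prompt(history: list[str], query: str, today: date) -> str:
    """Assemble the text a rewriting model could receive (hypothetical wording)."""
    lines = [
        "Rewrite the last user question so it can be understood on its own.",
        f"Today's date: {today.isoformat()}",  # lets the model resolve "last year"
        "Conversation so far:",
    ]
    lines += [f"- {turn}" for turn in history]
    lines.append(f"Last user question: {query}")
    return "\n".join(lines)


prompt = build_expansion_prompt(
    ["User: Who leads the sales team?", "Assistant: The sales team lead is listed in the org chart."],
    "And who held that role last year?",
    date(2025, 3, 1),
)
print(prompt)
```

Without the history, "that role" has no referent, and without the date, "last year" cannot be mapped to a concrete year for retrieval.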

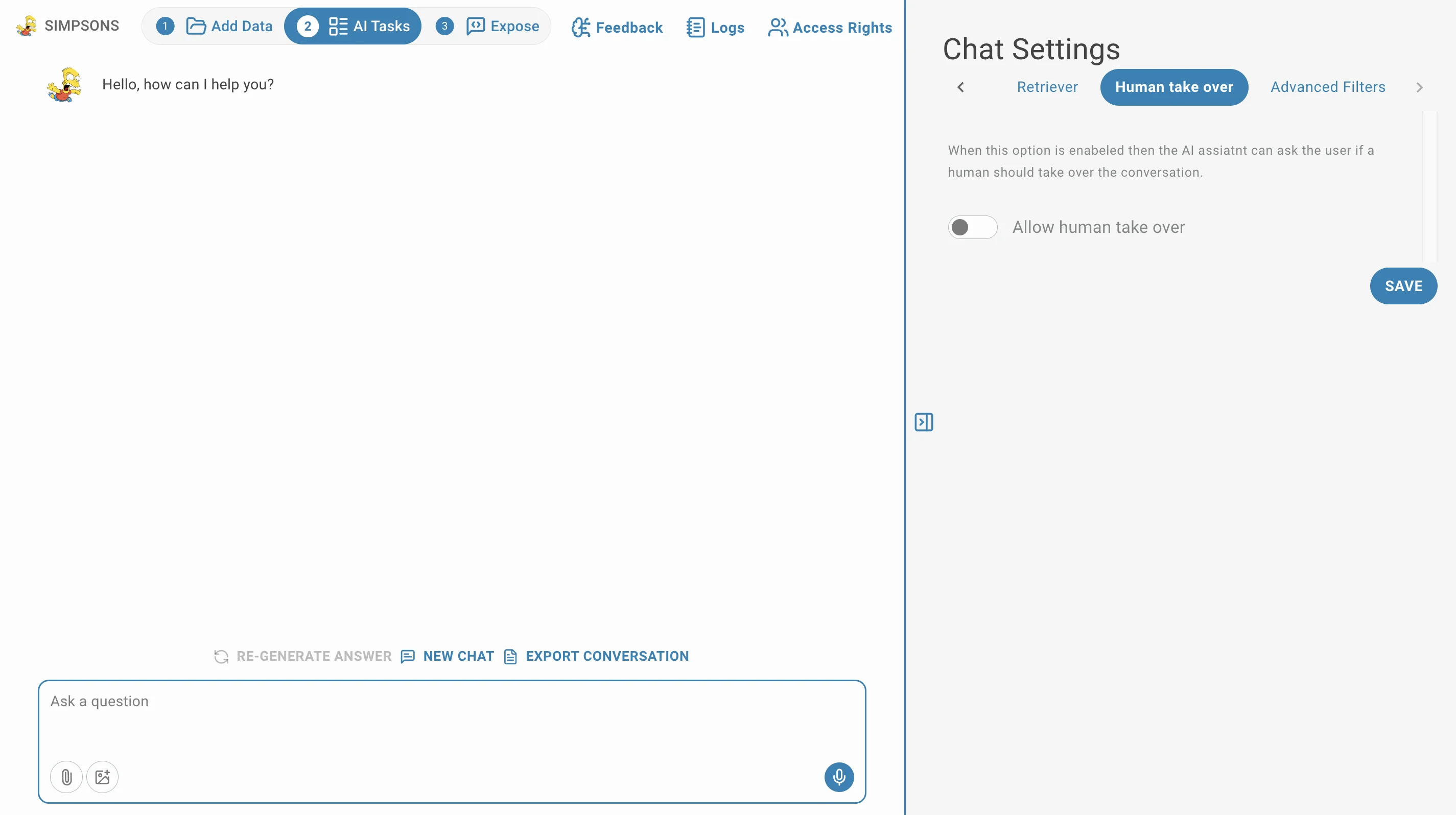

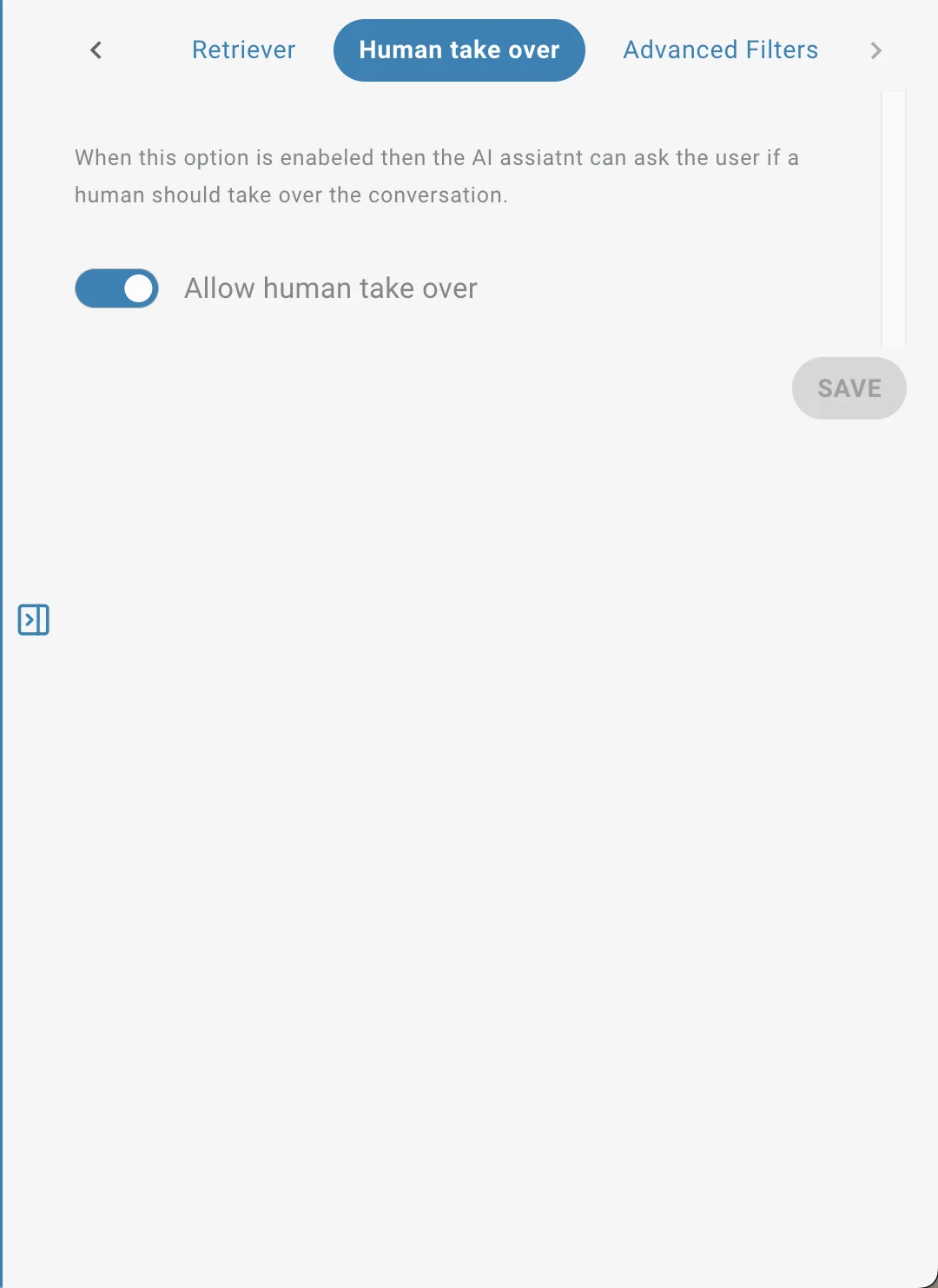

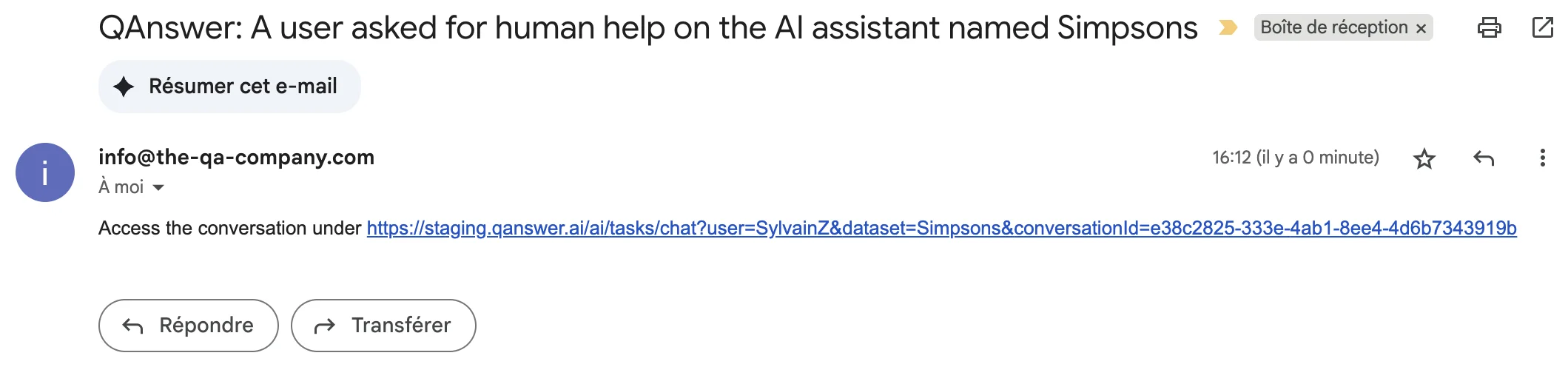

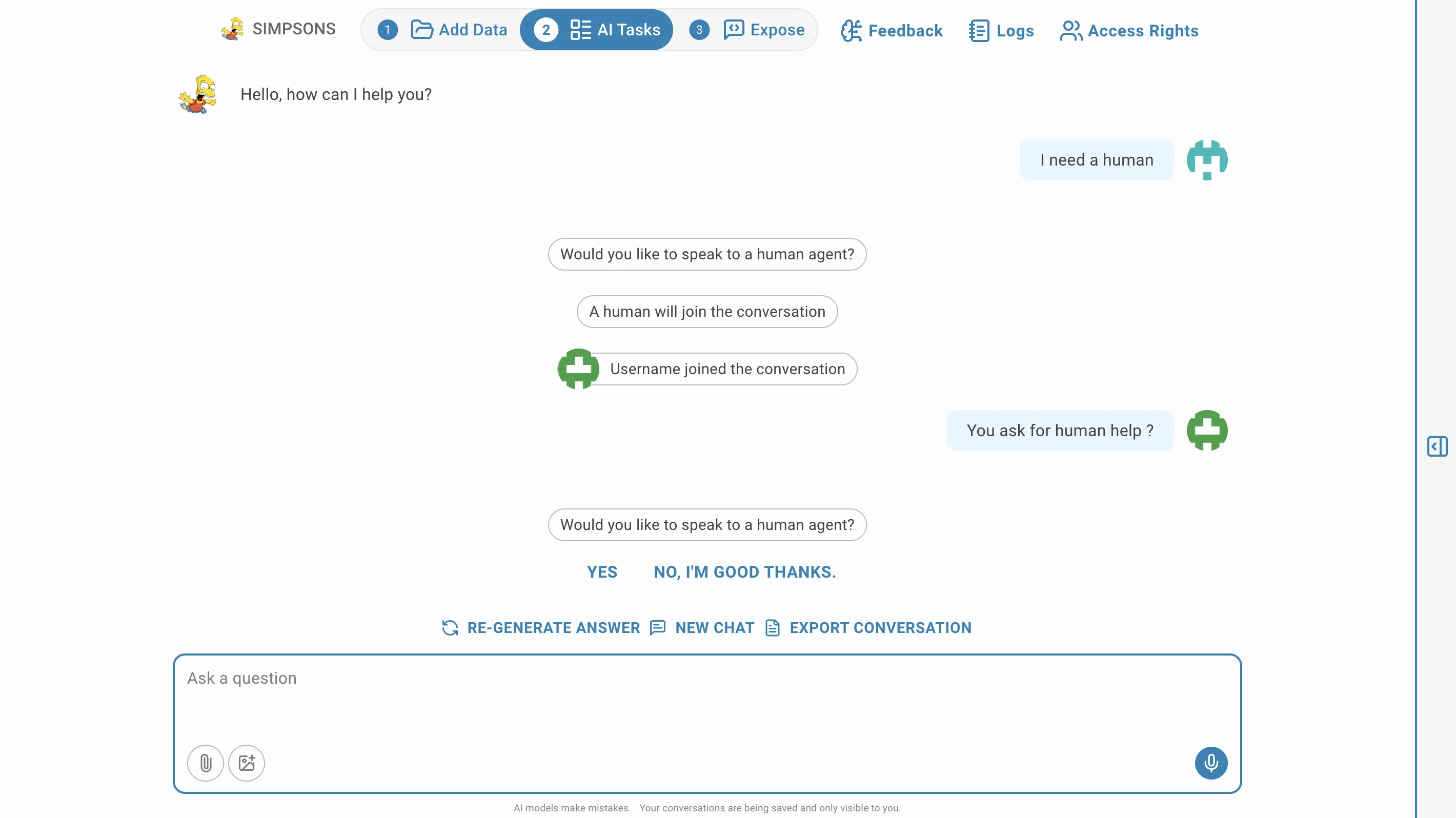

Human Takeover

- When this option is enabled, a user of the AI assistant can request a human to take over the chat. It works only if the AI assistant is shared with other users.

- An email is sent to the owner of the AI assistant informing them of the request.

- The owner can access the conversation and take over if needed.

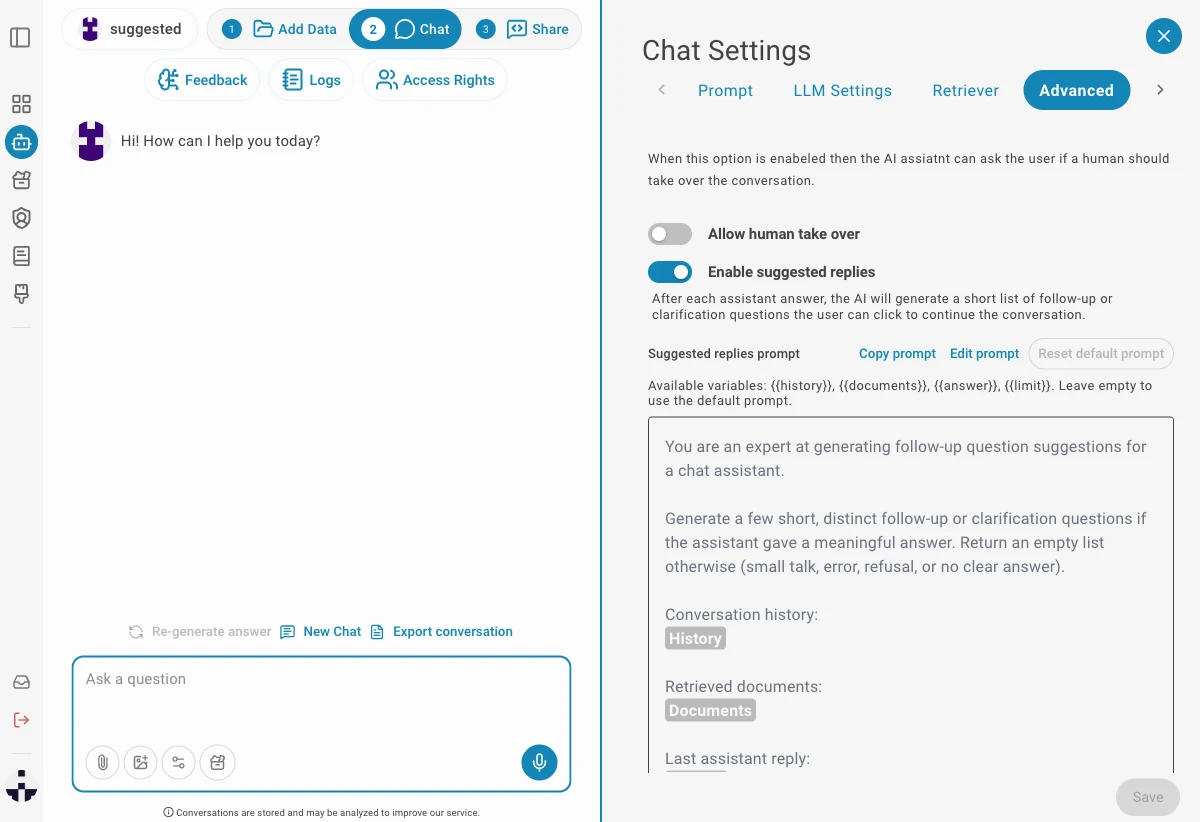

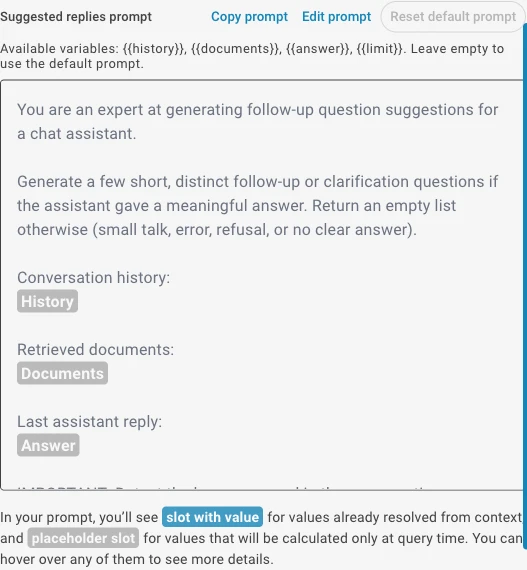

Suggested Replies

Suggested Replies automatically generates clickable follow-up question bubbles below each assistant response. Clicking a bubble sends that question to the assistant instantly, helping users explore topics without having to type.

- Enable the toggle in the Advanced settings tab to activate Suggested Replies.

- After each answer a set of relevant follow-up questions appears as clickable bubbles below the response.

- The prompt used to generate suggestions is fully customizable — you can change the language, tone, or focus of the suggestions.

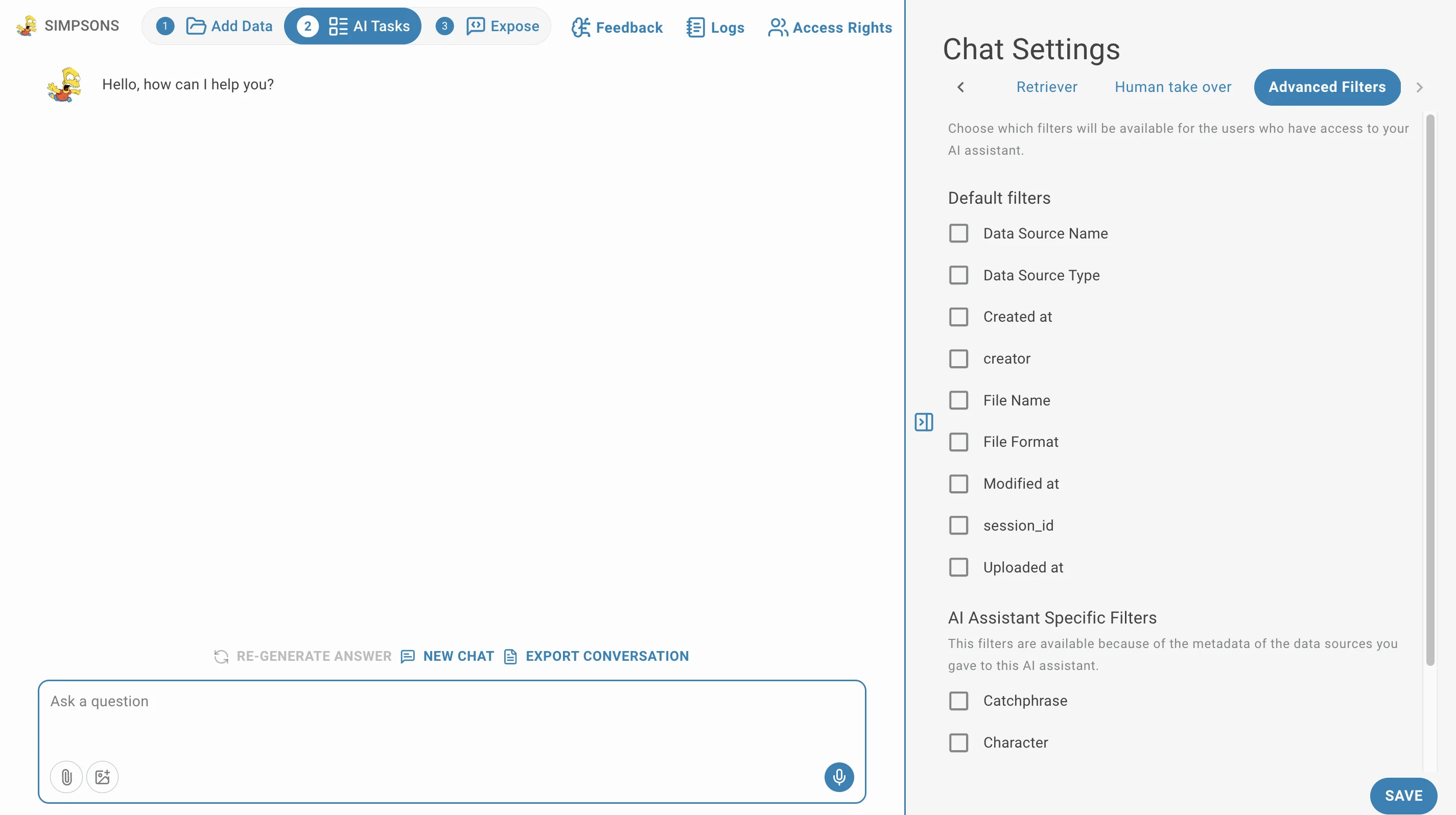

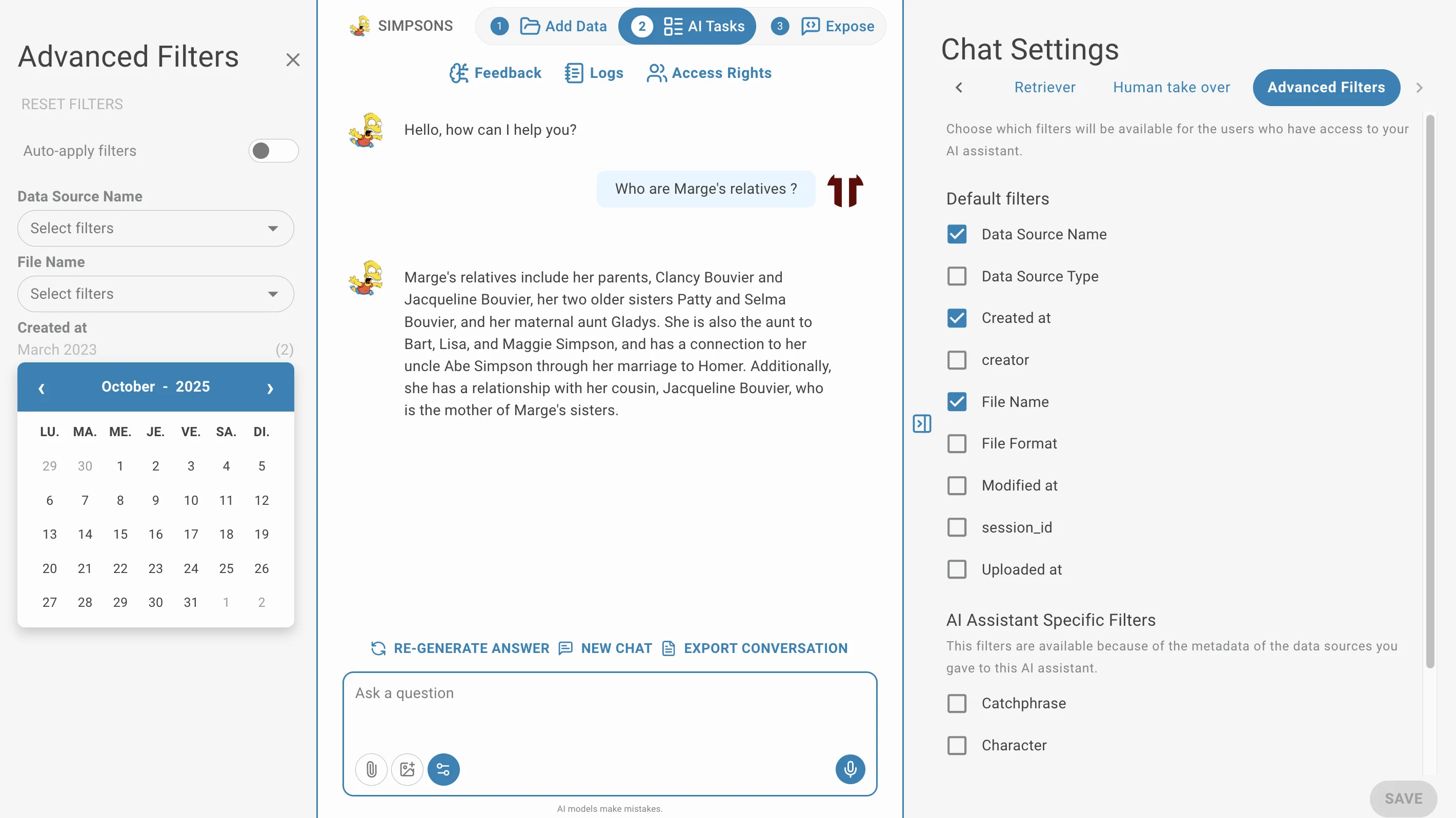

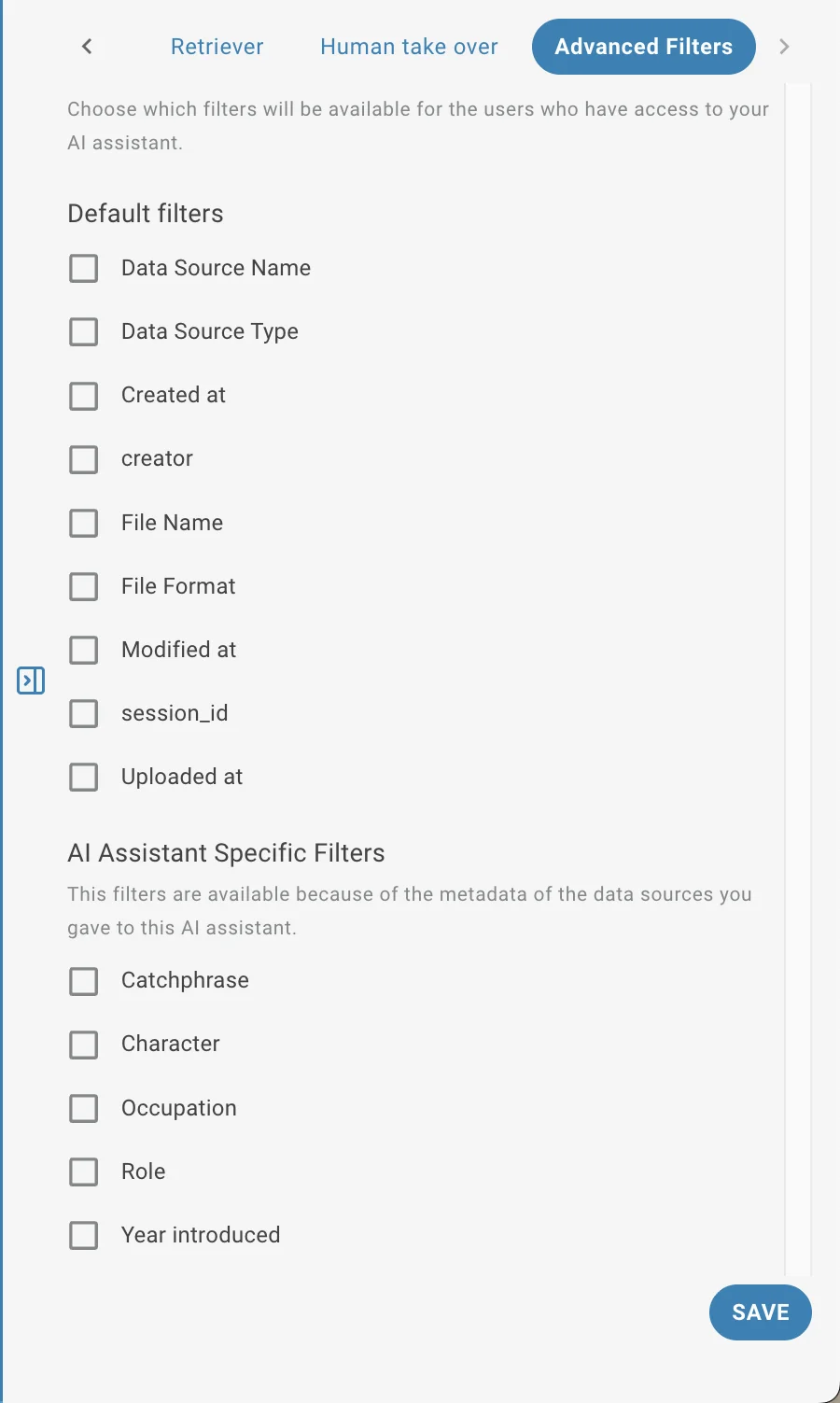

Advanced Filters

You can choose to filter the documents used to answer your question by clicking on Advanced filters.

Chat Metadata

To access Faceted Chat, go to AI Tasks → Chat. In the right panel, click Chat Settings → Advanced Filters, then enable the filters you want to make available to users.
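Conceptually, a metadata filter narrows the set of documents before any answer is generated. The sketch below shows that idea with plain dictionaries. The function `filter_documents`, the document layout, and the field names `department` and `year` are hypothetical examples, not QAnswer's data model.

```python
def filter_documents(documents: list[dict], filters: dict[str, set[str]]) -> list[dict]:
    """Keep documents whose metadata matches every active filter."""
    selected = []
    for doc in documents:
        metadata = doc.get("metadata", {})
        # A document passes only if each filtered field has an allowed value.
        if all(metadata.get(field) in allowed for field, allowed in filters.items()):
            selected.append(doc)
    return selected


# Hypothetical documents and metadata fields, for illustration only.
docs = [
    {"title": "Leave policy", "metadata": {"department": "HR", "year": "2024"}},
    {"title": "Sales report", "metadata": {"department": "Sales", "year": "2024"}},
    {"title": "Old leave policy", "metadata": {"department": "HR", "year": "2021"}},
]
selected = filter_documents(docs, {"department": {"HR"}, "year": {"2024"}})
print([doc["title"] for doc in selected])
```

With no filters enabled, every document passes, which matches the default unfiltered chat.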